* Note: Trackingplan's Regression Testing feature has been deprecated and is no longer available in Trackingplan. This content is kept for informational purposes to explain how event and data consistency could be validated in regression tests. For current solutions on data quality and event monitoring, please refer to trackingplan.com.

How tracking can become a first-class citizen in your QA

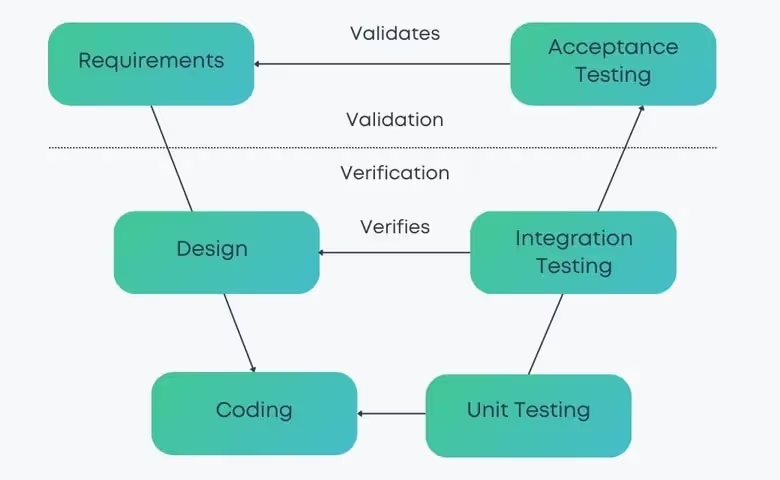

The impact of a bug in production software can be measured by the number of users affected, the length of time they had to put up with it, or the financial losses it caused. These consequences can be reduced significantly by finding bugs earlier, whether early in the field or internally during development. For this reason, applying Quality Assurance (QA) practices such as manual testing, automated code testing, and code reviews throughout the development process and software lifecycle has become the industry standard over the last two decades.

As Larry Smith proposed with shift-left testing, introducing additional quality control steps increases net productivity: detecting bugs earlier reduces both their impact and the cost of fixing them. Investing in automation through CI/CD has made it practical to run such pipelines efficiently.

Analytics and user tracking implementations are especially prone to bugs and breakages. I believe there are a number of reasons for this:

- Their requirements are often provided at the same time by Product, Marketing and Business teams, but the implementation is performed by Development teams. This cross-team scenario results in a domain and communication complexity that can lead to misunderstandings and comprehension gaps, which in turn lead to specification or implementation bugs.

- Similarly, the development cycle of tracking implementations is disconnected from the product feature development cycle because stakeholders, motivations, and planning do not necessarily align. Typically, analytics is added later, on top of the finished feature.

- The use of various third-party tools for analytics and user tracking on both Web and Mobile platforms (iOS/Android) leads to a mix of technologies and APIs for different purposes, from classical page-based tagging (e.g. with Google Analytics) and product analytics (e.g. with Amplitude) to user session recording (e.g. Hotjar) or even error logging (e.g. Sentry). These all have to coexist, each with its own technologies, dependencies, and versioning. This makes maintaining consistency among them, and in the data we collect through them, a herculean task.

- We apply no testing to them!

There are a number of technologies on the market that address some of these issues. For example, solutions like Segment or AVO allow developers to use a single type-safe library to route their tracking to third-party tools and to define specifications on the events and properties they track.
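To make the idea of a single type-safe tracking library concrete, here is a minimal sketch. It is not the API of Segment or Avo: the `SignupCompleted` event, the `Tracker` class, and the destination callables are hypothetical names chosen for illustration. The event specification lives in one typed class, and one call fans the event out to every destination.

```python
from dataclasses import asdict, dataclass, fields
from typing import Callable

# A destination is any callable that receives an event name and its properties.
# In a real setup these would wrap third-party SDKs (assumption for this sketch).
Destination = Callable[[str, dict], None]


@dataclass(frozen=True)
class SignupCompleted:
    """Hypothetical event specification: the fields are the allowed properties."""

    plan: str
    referrer: str
    seats: int

    def __post_init__(self) -> None:
        # Dataclasses do not enforce annotations at runtime, so check them here.
        for field in fields(self):
            value = getattr(self, field.name)
            if not isinstance(value, field.type):
                raise TypeError(
                    f"{field.name} must be {field.type.__name__}, "
                    f"got {type(value).__name__}"
                )


class Tracker:
    """Routes one validated event to every registered destination."""

    def __init__(self, destinations: list[Destination]) -> None:
        self._destinations = destinations

    def track(self, event: SignupCompleted) -> None:
        name = type(event).__name__
        properties = asdict(event)
        for send in self._destinations:
            send(name, properties)


# Usage: two stand-in destinations that just record what they receive.
received_a: list = []
received_b: list = []
tracker = Tracker([
    lambda name, props: received_a.append((name, props)),
    lambda name, props: received_b.append((name, props)),
])
tracker.track(SignupCompleted(plan="pro", referrer="newsletter", seats=3))
```

The trade-off of this approach is that every tracking call in the codebase has to go through the wrapper, which is the kind of code change the no-code approach described next tries to avoid.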

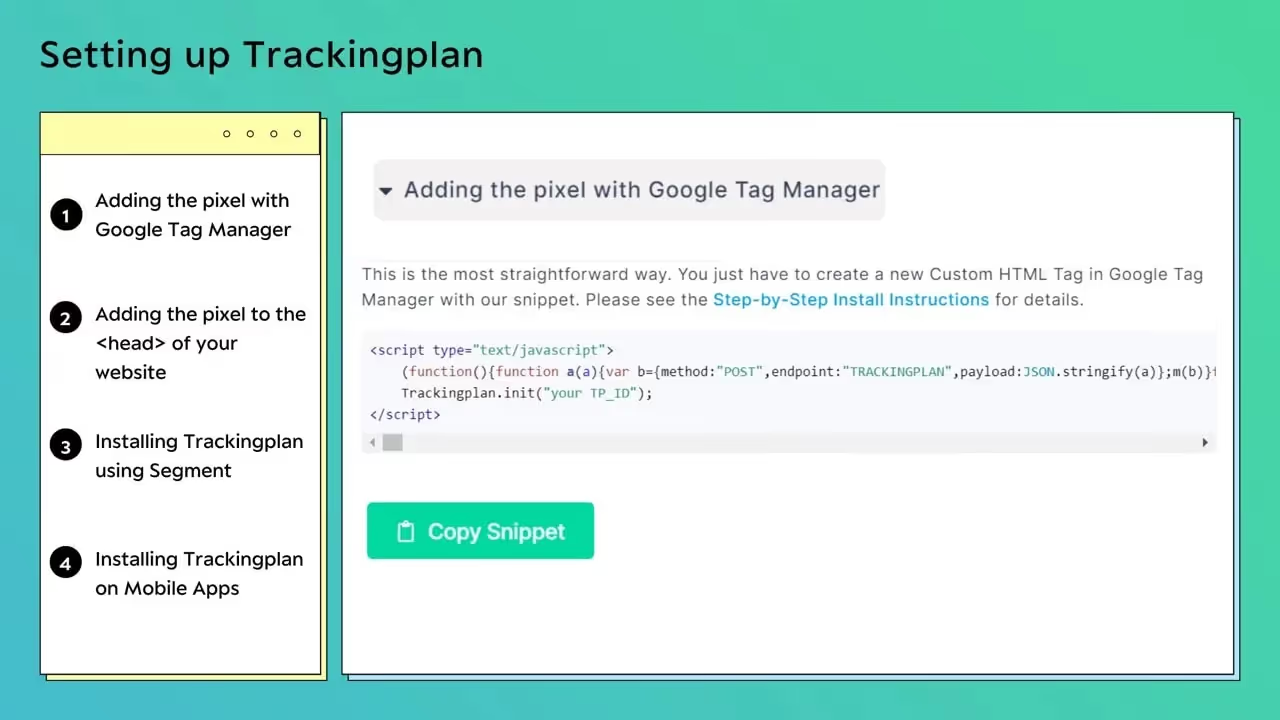

Trackingplan, on the other hand, is a no-code solution that, in contrast to Segment, AVO, and other current approaches, aims to address these challenges with minimal intervention by leveraging the testing automations you already have in place.

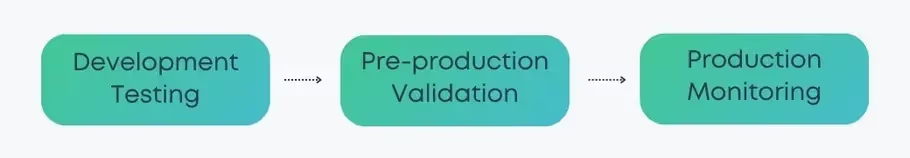

Production monitoring

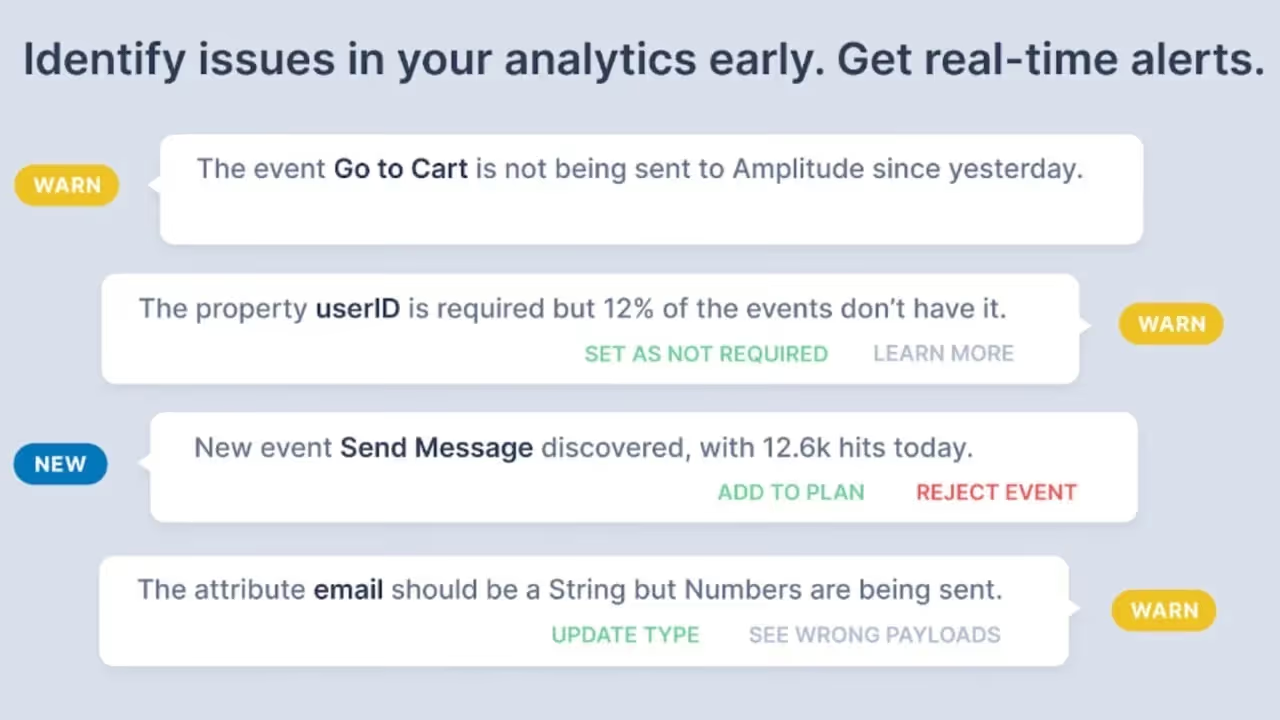

It starts from the right, by transparently monitoring the data that is sent to analytics and tracking destinations and detecting possible problems. Since our system learns the baselines automatically from your Web, iOS and Android Apps, it can detect drifts, anomalies, and gaps in both user traffic and data schemas out of the box. Specifications and thresholds can be adjusted manually or reset as required.
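As an illustration of what learning a baseline and detecting drift can mean in practice, here is a simplified sketch. It is not Trackingplan's implementation: the event shape (`name` plus `properties`), the function names, and the 50% traffic tolerance are all assumptions made for the example.

```python
from collections import defaultdict


def learn_baseline(events):
    """Record, per event name, the properties seen and the types of their values."""
    baseline = defaultdict(lambda: defaultdict(set))
    for event in events:
        properties = baseline[event["name"]]  # registers events without properties too
        for prop, value in event["properties"].items():
            properties[prop].add(type(value).__name__)
    return {name: dict(props) for name, props in baseline.items()}


def check_event(baseline, event):
    """Return a list of schema findings for one event; an empty list means no drift.

    Simplification: every property ever seen in the baseline is treated as required.
    """
    name = event["name"]
    if name not in baseline:
        return [f"unknown event: {name}"]
    expected = baseline[name]
    actual = event["properties"]
    findings = []
    for prop in sorted(expected.keys() - actual.keys()):
        findings.append(f"{name}: missing property '{prop}'")
    for prop, value in sorted(actual.items()):
        type_name = type(value).__name__
        if prop not in expected:
            findings.append(f"{name}: unexpected property '{prop}'")
        elif type_name not in expected[prop]:
            findings.append(f"{name}: '{prop}' changed type to {type_name}")
    return findings


def traffic_anomaly(baseline_count, current_count, tolerance=0.5):
    """Flag a volume change larger than `tolerance` relative to the baseline."""
    if baseline_count == 0:
        return current_count > 0
    return abs(current_count - baseline_count) / baseline_count > tolerance


history = [
    {"name": "purchase", "properties": {"value": 9.99, "currency": "EUR"}},
    {"name": "purchase", "properties": {"value": 20.0, "currency": "USD"}},
]
baseline = learn_baseline(history)

# A release that drops `currency` and sends `value` as a string.
broken = {"name": "purchase", "properties": {"value": "5"}}
findings = check_event(baseline, broken)
```

A production system would need much more than this (optional properties, seasonality in traffic, per-platform baselines), but the core idea is the same: the specification is inferred from observed data rather than written by hand.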

Pre-production validation

We then move to the left, integrating your staging and testing environments and comparing them to your baseline. This allows you to see the difference between one release and the next, detecting broken events or schemas before you release them. Any existing automated QA you have implemented, such as functional or non-functional regression testing (e.g. with Cypress), will stress your analytics under the watch of our system.

As a result, you can also cover the analytics service integrations in your existing release testing by simply integrating Trackingplan without changing your feature or testing code in any way.
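Comparing one release with the next can be pictured as a diff over the events captured while the same automated test suite runs against each build. The sketch below assumes the captured traffic is available as a plain list of event names; `compare_runs` and the sample event names are hypothetical.

```python
from collections import Counter


def compare_runs(baseline_names, candidate_names):
    """Diff the events seen while the same test suite ran against two builds."""
    base = Counter(baseline_names)
    cand = Counter(candidate_names)
    return {
        # Events the previous release sent that the candidate no longer sends.
        "missing": sorted(name for name in base if name not in cand),
        # Events that appear only in the candidate.
        "new": sorted(name for name in cand if name not in base),
        # Events present in both runs whose counts differ: (baseline, candidate).
        "count_changed": {
            name: (base[name], cand[name])
            for name in sorted(base.keys() & cand.keys())
            if base[name] != cand[name]
        },
    }


previous_release = ["page_view", "page_view", "add_to_cart", "purchase"]
release_candidate = ["page_view", "add_to_cart", "add_to_cart", "signup"]
diff = compare_runs(previous_release, release_candidate)
```

Because the test suite itself is unchanged between the two runs, any difference in the captured events points to a change in the tracking code rather than in user behavior.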

Trackingplan’s results can be reviewed in real-time or through automated digests (e.g. reporting results on daily or weekly development cycles).

Development testing

See the bug before it is merged: before a merge, developers or CI/CD pipelines (e.g. GitHub Actions) run unit tests and possibly a subset of functional tests on the code affected by their changes. If these tests exercise analytics code, Trackingplan validates the data specifications against the baseline and returns a report, which can be surfaced as an error or a warning in your CI/CD pipeline.
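How a validation report becomes an error or a warning in a pipeline can be sketched as a small gate that maps findings to a process exit code. The report format (a list of severity and message pairs) and the `gate` function are assumptions for illustration, not Trackingplan's actual output.

```python
def gate(findings, fail_on=("error",)):
    """Print each finding and return an exit code for the CI step.

    `findings` is a list of (severity, message) tuples. Any finding whose
    severity is listed in `fail_on` makes the step fail (exit code 1);
    everything else is only reported.
    """
    exit_code = 0
    for severity, message in findings:
        print(f"[{severity.upper()}] {message}")
        if severity in fail_on:
            exit_code = 1
    return exit_code


report = [
    ("warning", "page_view: unexpected property 'experiment_id'"),
    ("error", "purchase: missing property 'currency'"),
]
default_result = gate(report)  # errors block the merge, warnings do not
strict_result = gate(report[:1], fail_on=("error", "warning"))  # warnings block too
```

In a pipeline step, the script would end with `sys.exit(gate(report))`, so that a non-zero exit code marks the job as failed. Making `fail_on` configurable lets a team start with warnings only and tighten the gate once the baseline is trusted.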

Analytics and user tracking data is used to direct our product development and understand our users, measure how our business is doing, and perform experiments and predictions on markets and user behavior, among other things. It is clear that it is mission critical for any business, from small startups to large enterprises. However, its value is limited by undetected implementation bugs or errors that are fixed too late. It’s time we made our analytics a first-class citizen in our development process.

Alexandros Chaaraoui, CTO & Co-founder of Trackingplan

Trackingplan is used by medium to large enterprises, such as Typeform, Freepik, and TravelPerk, to monitor and test their analytics and tracking integrations.

Get started today to experience the benefits of Trackingplan first-hand and automatically validate your Digital Analytics health.

For more information, you can always book a demo.
