How poor data quality negatively impacts your business

Poor data quality directly affects a company's governance and compliance processes, causing additional rework and delays. These effects reduce income and increase costs, which ultimately means lost revenue for the business.

According to the DAMA Data Management Body of Knowledge (DMBOK), estimates vary, but experts believe that organizations spend between 10% and 30% of revenue handling data quality issues. Poor data quality also carries additional direct and indirect costs that are not monetary.

Data governance

Indirect costs can be difficult to quantify, and you may not immediately realize what poor data quality is costing you. Some effects are long-lasting, such as a damaged reputation. In addition, poor data quality can undermine evidence, foster mistrust between the teams involved, and lead to poor decision-making.

Decisions and policies based on facts are only as good as the data behind them. Missing or duplicated data can result in overcounting or undercounting, leading to bad decisions and unwanted effects.

Opportunities lost

Poor data quality can also lead to missed opportunities and service delivery problems. For instance, inaccurate or out-of-date data may lead you to provide services in a location where they are not needed, whereas high-quality data may reveal more lucrative alternatives.

Poor data quality can prevent organizations from determining whether money and resources are being used effectively. Conversely, high-quality data can result in a more targeted organizational strategy, better use of finances, and greater operational efficiency.

Reputational damage

Poor data quality also creates reputational risk. It can lead to unwanted media attention and GDPR difficulties, since GDPR requires personal data to be accurate and kept up to date. In addition, duplicate records may cause you to contact the same person several times, which irritates people, erodes trust, and wastes time and resources.

Incorrect or missing personal data may have significant consequences for the individual. For instance, they can miss critical deadlines or not receive necessary information. Inconsistent and unreliable data might make it challenging to determine what is correct. Users may therefore question the integrity of your data, which could lead to a loss of confidence in your company.

What are the most common data quality issues?

Data-driven enterprises rely on modern technology and AI to get the most out of their data assets. However, they constantly struggle with data quality challenges. If you want these technologies and tools to work for you, you must keep a sharp focus on data quality. Below, we examine some of the most prevalent data quality challenges and how to address them.

Duplicate data

Today, enterprises are inundated with data from all directions, including local databases, cloud data lakes, and streaming data. They may also have application and system silos. These sources are likely to contain a significant amount of redundancy and overlap, which hurts the quality of your data. For example, duplicate contact information has a substantial impact on customer satisfaction: some prospects may be approached multiple times, which is detrimental to marketing campaigns. Duplicate data also makes distorted analytical results more likely, and it can yield skewed ML models when used as training data.
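As an illustration of how duplicate contacts can be caught, here is a minimal sketch in Python. It assumes, purely for the example, that an email address identifies a contact and that differences in case or surrounding whitespace should be ignored. The function names and sample records are hypothetical; real deduplication usually needs fuzzier matching than this.

```python
def normalize_email(email: str) -> str:
    """Trim and lower-case an email so trivially different spellings compare equal."""
    return email.strip().lower()


def deduplicate_contacts(contacts: list[dict]) -> list[dict]:
    """Keep the first record seen for each normalized email address."""
    seen: set[str] = set()
    unique: list[dict] = []
    for contact in contacts:
        key = normalize_email(contact["email"])
        if key not in seen:
            seen.add(key)
            unique.append(contact)
    return unique


# Hypothetical sample data: the first two records describe the same person.
contacts = [
    {"name": "Ana Ruiz", "email": "ana@example.com"},
    {"name": "Ana Ruiz", "email": " Ana@Example.com "},
    {"name": "Ben Cole", "email": "ben@example.com"},
]
unique_contacts = deduplicate_contacts(contacts)
print(len(unique_contacts))  # 2
```

Keeping the first record is an arbitrary choice made for brevity. In practice you might prefer the most recently updated record or merge fields from both.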

Inaccurate data

Poor data quality in highly regulated industries, such as healthcare, can even have fatal consequences. Inaccurate data does not reflect the real world and cannot help you plan the right response. If your customer data is inaccurate, your personalized customer experiences and marketing activities will fall short.

Data inaccuracies can be attributed to multiple causes, including human error, data drift, and data degradation. Gartner estimates that approximately 3% of global data is lost every month, which is concerning. In addition, data quality can deteriorate with time, and data might lose its integrity as it travels across systems.

Ambiguous data

Even under rigorous oversight, errors can occur in massive databases or data lakes. The problem becomes even more overwhelming with high-speed data streaming. For example, column titles can be misleading, formatting problems can creep in, and spelling errors can go unnoticed. Such ambiguous data can cause multiple reporting and analytics issues.

Hidden data

Most companies use only part of their data, with the remainder potentially lost in data silos or dumped in data graveyards. For instance, customer data available to sales may not be shared with customer service, which means missing an opportunity to build more accurate and comprehensive customer profiles. In addition, hidden data prevents you from spotting opportunities to enhance services, create innovative products, and optimize processes.

Inconsistent data

When you work with several data sources, the same information is likely to exist in more than one of them, and the copies may not match. For example, there may be variations in formats, units, or even spelling. Inconsistent data can also be introduced during company mergers and migrations. If data inconsistencies are not reconciled regularly, they tend to accumulate and devalue the data. Because data-driven companies need reliable data to power their analytics, they monitor data consistency closely.
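One common way to reconcile such variations is to convert every value to a single canonical form on arrival. The sketch below assumes, for illustration only, that dates arrive in one of three known formats and that weights arrive in kilograms, grams, or pounds. The format list, unit table, and function names are hypothetical and would need to match your own sources.

```python
from datetime import datetime

# Assumed input formats. Note that "%d/%m/%Y" treats 01/02/2023 as 1 February;
# a source that writes month first would need its own rule.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y/%m/%d")

# Conversion factors to kilograms for the units assumed in this example.
UNIT_TO_KG = {"kg": 1.0, "g": 0.001, "lb": 0.45359237}


def normalize_date(raw: str) -> str:
    """Return the date as an ISO 8601 string, trying each known format in turn."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date format: {raw!r}")


def to_kilograms(value: float, unit: str) -> float:
    """Convert a weight to kilograms; raises KeyError for an unknown unit."""
    return value * UNIT_TO_KG[unit.strip().lower()]


print(normalize_date("31/01/2023"))  # 2023-01-31
print(normalize_date("2023-01-31"))  # 2023-01-31
```

Failing loudly on an unrecognized format or unit is deliberate here: silently guessing would add new inconsistencies rather than remove them.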

Too much data

Given how much emphasis we place on data-driven analytics and its benefits, having too much data may not sound like a problem. However, it is. When you are looking for the data that matters to your analytical initiatives, it is easy to get lost in a sea of information. Business users, data analysts, and data scientists spend 80% of their time locating and preparing the right data. Other data quality challenges become more serious as data volume increases, particularly for streaming data, big files, and databases.

Data downtime

Companies that are data-driven rely on data to drive their decisions and operations. However, there may be temporary intervals when their data are unreliable or unavailable (especially during events like M&A, reorganizations, infrastructure upgrades, and migrations). This data disruption can significantly affect businesses, including client complaints and poor analytical results. According to a survey, approximately 80% of a data engineer's effort is spent updating, maintaining, and ensuring the quality of the data pipeline.

The causes of data downtime range from schema changes to migration problems. In addition, the size and complexity of data pipelines can be daunting. Therefore, it is critical to monitor data downtime continuously and to reduce it with automated methods.
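Since schema changes are one of the causes mentioned above, an automated check can compare each incoming record against the schema you expect. The following is a minimal sketch only: the `EXPECTED_SCHEMA` fields and the sample event are hypothetical, and a production pipeline would typically rely on a dedicated validation or monitoring tool.

```python
# Hypothetical expected schema: field name -> required Python type.
EXPECTED_SCHEMA = {"event": str, "user_id": int, "timestamp": str}


def schema_violations(record: dict) -> list[str]:
    """List every way a record deviates from EXPECTED_SCHEMA."""
    problems: list[str] = []
    for field, expected_type in EXPECTED_SCHEMA.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            problems.append(
                f"wrong type for {field}: {type(record[field]).__name__}"
            )
    for field in record:
        if field not in EXPECTED_SCHEMA:
            problems.append(f"unexpected field: {field}")
    return problems


# A record produced after an imagined upstream change: user_id became a
# string and "timestamp" was renamed to "ts".
changed_event = {"event": "purchase", "user_id": "42", "ts": "2024-01-15T10:00:00"}
for problem in schema_violations(changed_event):
    print(problem)
```

Running a check like this on a sample of records and alerting when violations appear is one way to notice a breaking change before it reaches reports.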

How to spot poor data quality?

The quality of the input dictates the quality of the output. It is almost impossible to generate accurate and trustworthy reports from inadequate, inconsistent, or corrupted data. So how can you determine whether your company has a data quality issue? Here are some warning signs that you may have poor data quality.

- Missing essential information

Although it is common for a dataset to have some gaps, the absence of crucial data causes numerous issues. Here are the three most important ones:

- The absence of data reduces statistical power, which reduces the probability of identifying an actual effect.

- The absence of data may introduce errors when estimating parameters.

- If the essential information is missing, the predictive validity of the samples may be compromised.

- Menial tasks demand a great deal of effort and time

Your data is likely flawed if you spend most of your time on manual activities. For example, an inadequate data management plan may require you to manually organize data from multiple sources, contact people to fill in missing information, and enter the data into spreadsheets.

- Insufficient actionable insights

Actionable insights are conclusions drawn from data that can be translated directly into an action or response. To qualify, they must be pertinent, specific, and useful to the decision-maker. If your data only confirms what you already know, it is of minimal value.

- Insights are late in arriving

If insights arrive late, it is often because the data is scattered and hard to reach. You need immediate access to data stored in a centralized database so that you can generate reports quickly and easily. Centralization has other advantages too: reduced complexity, for instance, means fewer errors and simpler access to information. In addition, when data is centralized, the entire organization works from the same blueprint and follows the same rules.

To spot poor data quality, you should run data quality checks regularly. The process starts with defining the quality measures, continues with running tests to identify quality concerns, and ends with resolving the issues if the system supports it. Checks are often defined at the attribute level to make testing and resolution faster.

Standard data quality tests consist of the following:

- Identifying duplicates and overlaps to evaluate their uniqueness.

- Checking for required fields, null values, and missing values to discover and solve missing information.

- Using format checks to ensure uniformity.

- Analyzing the range of values to check validity.

- Checking how recently the data was updated to assess its recency or freshness.

- Running integrity checks at the row, column, value, and conformity level.
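The checks listed above can be sketched as a small attribute-level test suite. This is an illustrative example rather than a definitive implementation: the field names (`id`, `email`, `age`, `updated_at`), the thresholds, and the simple email pattern are all assumptions chosen for the sample data.

```python
import re
from datetime import datetime, timedelta

# Assumed rules for this example only.
REQUIRED_FIELDS = ("id", "email", "updated_at")
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # deliberately simple


def run_quality_checks(rows, now, max_age_days=30):
    """Return a list of (row_index, issue) tuples found in the rows."""
    issues = []
    seen_ids = set()
    for index, row in enumerate(rows):
        # Uniqueness: flag ids that were already seen.
        if row.get("id") in seen_ids:
            issues.append((index, "duplicate id"))
        seen_ids.add(row.get("id"))
        # Completeness: required fields must be present and non-empty.
        for field in REQUIRED_FIELDS:
            if row.get(field) in (None, ""):
                issues.append((index, f"missing {field}"))
        # Format: a non-empty email must look like an email.
        email = row.get("email")
        if email and not EMAIL_PATTERN.match(email):
            issues.append((index, "invalid email format"))
        # Range: age must fall within a plausible interval.
        age = row.get("age")
        if age is not None and not 0 <= age <= 120:
            issues.append((index, "age out of range"))
        # Freshness: records older than max_age_days are stale.
        updated = row.get("updated_at")
        if updated is not None and now - updated > timedelta(days=max_age_days):
            issues.append((index, "stale record"))
    return issues


rows = [
    {"id": 1, "email": "ana@example.com", "age": 34, "updated_at": datetime(2024, 1, 10)},
    {"id": 1, "email": "ben@example", "age": 150, "updated_at": datetime(2023, 6, 1)},
    {"id": 2, "email": "", "age": 28, "updated_at": datetime(2024, 1, 12)},
]
issues = run_quality_checks(rows, now=datetime(2024, 1, 15))
for row_index, issue in issues:
    print(row_index, issue)
```

Passing `now` in explicitly, instead of reading the clock inside the function, keeps the freshness check reproducible when you test it.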

The ways poor data quality is costing your business

Similar to technical debt, a high level of data debt will prevent you from achieving the ROI you anticipate. In addition, poor data quality reduces the efficacy of new technologies and processes, affecting your capacity to engage with customers, rely on analytics, and more.

It is believed that nearly a third of the data needs to be corrected. High levels of mistrust in data lead to a lack of data usage in your organization, negatively affecting strategic planning, key performance indicators, and business outcomes. In addition, lacking trust in data quality makes it difficult to gain support for particular projects, investments, and strategy revisions.

High-priority investments such as predictive analytics and artificial intelligence depend on high-quality data. Inaccurate, insufficient, or irrelevant data will lead to delays or a lack of return on investment. This is a significant barrier to becoming data-driven, and it puts you at a competitive disadvantage. Although companies say that data is one of their most significant strategic assets, inadequate data management puts them in danger of breaching compliance standards, which can result in fines of hundreds of millions of dollars.

Ways to solve data quality issues

You can address the issue of poor data quality in various ways. Here are some solutions to data problems:

- Fix the source system's data. Poor data quality problems can often be resolved simply by cleaning the original source. The phrase "garbage in, garbage out" applies here: if the source data is wrong or incomplete, the database will be polluted and produce poor-quality results. Fixing data in the source system is frequently the most effective way to ensure good customer experiences and reliable analysis downstream.

- Remediate the source system to resolve data issues. This strategy may sound similar to the first, but it works differently. The source system that collects the data, whether a website or another source, can be configured to cleanse and correct data automatically before it reaches the database. This approach is not entirely "set it and forget it," but it comes close.

- Understand poor source data and resolve problems throughout the ETL phase. Before client data can be examined, it is usually extracted, transformed, and loaded (ETL). If you can correct data at this stage before it enters the database, you can eliminate various data quality issues.
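To make the ETL option more concrete, here is a minimal sketch of a transform step that cleans records and sets aside the ones it cannot fix before anything is loaded. Everything in it is assumed for illustration: the CSV columns, the rule that a record without an email is rejected, and the in-memory list standing in for the target database.

```python
import csv
import io

# Hypothetical raw extract with stray whitespace, mixed case, and a missing email.
RAW_CSV = """customer_id,email,country
1, Ana@Example.com ,es
2,,US
3,ben@example.com,us
"""


def extract(source: str) -> list[dict]:
    """Read the raw CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(source)))


def transform(rows: list[dict]) -> tuple[list[dict], list[dict]]:
    """Clean each row; rows without an email go to the rejected list."""
    clean, rejected = [], []
    for row in rows:
        email = row["email"].strip().lower()
        if not email:
            rejected.append(row)
            continue
        clean.append({
            "customer_id": int(row["customer_id"]),
            "email": email,
            "country": row["country"].strip().upper(),
        })
    return clean, rejected


def load(rows: list[dict], target: list) -> None:
    """Append the cleaned rows to the target (a list standing in for a database)."""
    target.extend(rows)


warehouse: list[dict] = []
clean_rows, rejected_rows = transform(extract(RAW_CSV))
load(clean_rows, warehouse)
print(len(warehouse), "loaded,", len(rejected_rows), "rejected")  # 2 loaded, 1 rejected
```

Keeping the rejected rows instead of dropping them silently gives you a record of what needs to be fixed at the source.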

Over the past decade, the business world has grown increasingly data-driven, and this trend is not expected to decrease anytime soon. You must therefore ensure high data quality and implement the appropriate data quality methods and tools.

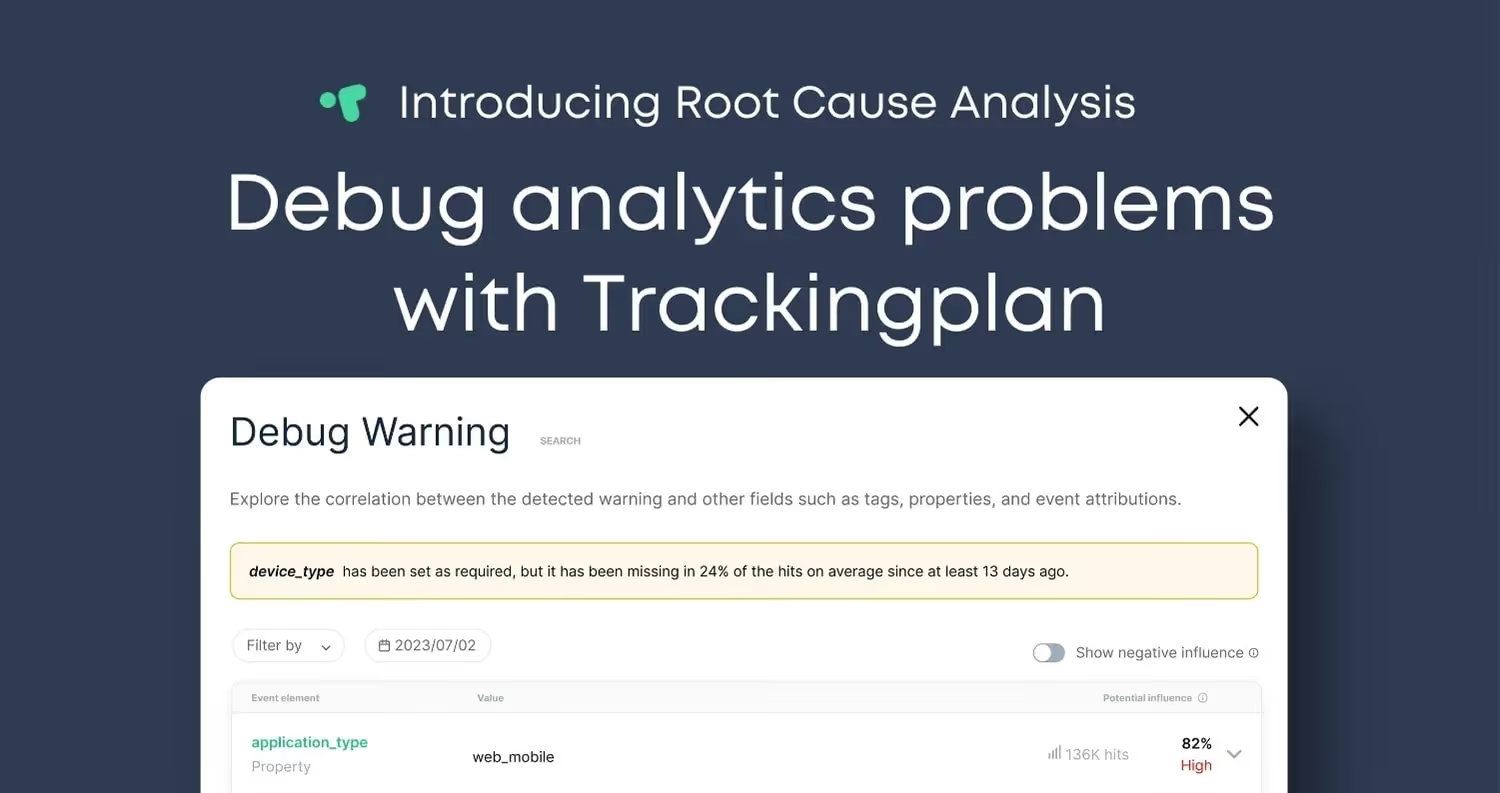

Trackingplan is a fully automated monitoring tool that finds problems in your analytics, marketing, attribution pixels, and campaigns and tells you how to fix them. This way, you can ensure the quality of your data and rely on it to guide your business.

Get started for free to avoid all small and hard-to-detect data issues that make your growth strategies fall apart, or feel free to book a demo.
