Data integrity: A quick definition

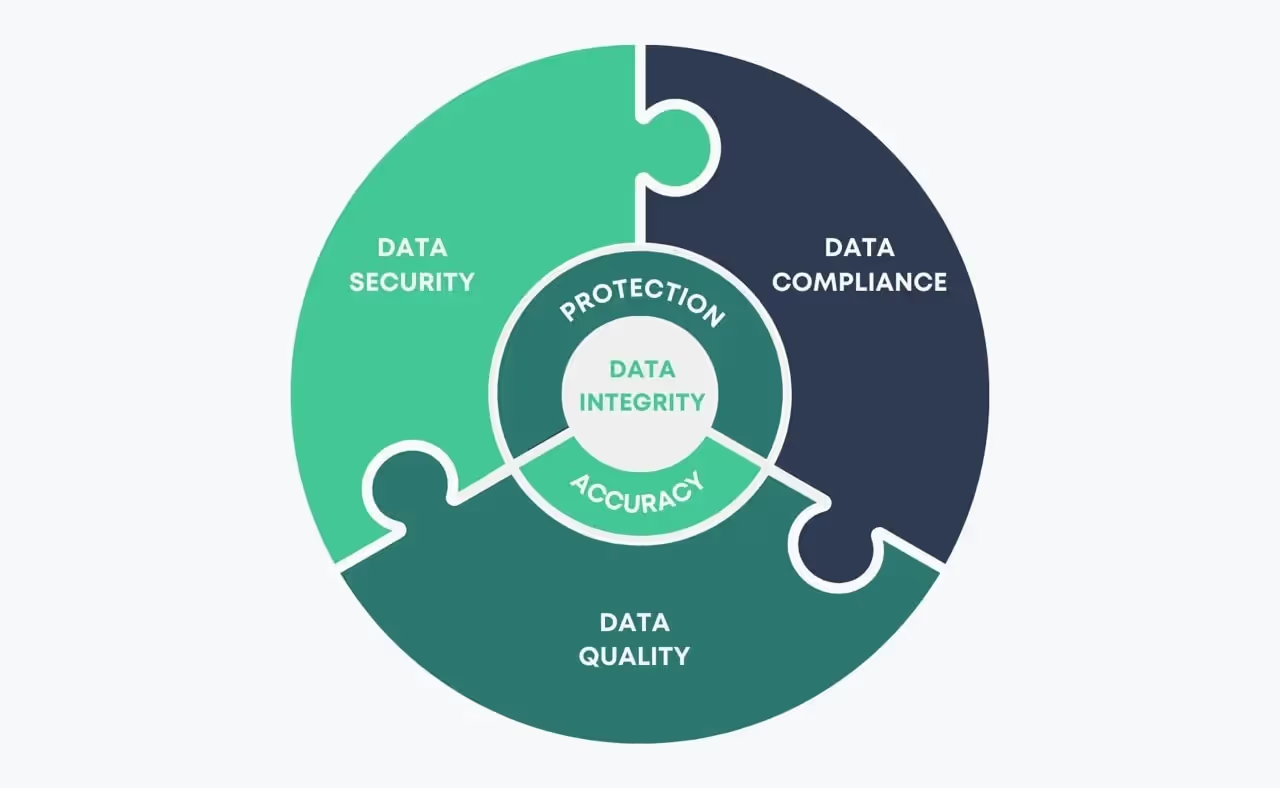

Data integrity refers to the accuracy, consistency, and reliability of data throughout its lifecycle. It ensures that information remains complete, unaltered, and trustworthy—whether it’s stored, processed, or transferred. Maintaining data integrity is essential for businesses to make informed decisions, comply with regulations, and protect against data loss or corruption.

Data integrity is maintained through a set of processes, rules, and standards that aim to preserve the accuracy and security of data in line with regulatory compliance frameworks, such as the General Data Protection Regulation (GDPR) or the California Consumer Privacy Act (CCPA).

In that sense, data integrity is like building a fortress around your company’s data, ensuring that it remains untampered, secure, reliable, and complete regardless of the passage of time or how often it is accessed.

Why is data integrity important?

Today, a large number of businesses thrive by delivering digital products and services, and data has become the primary way to understand customers’ needs. For these reasons, data integrity plays a key role in protecting a business from data loss and outside threats.

Let’s have a look at how this manifests in today's digital era:

Business intelligence

The success of any business decision strongly relies on the integrity of the data that guided it. Organizations routinely make data-driven decisions, but without data integrity those decisions are likely to lead to poor or flawed outcomes. This is why data integrity underpins many successful data-driven enterprises.

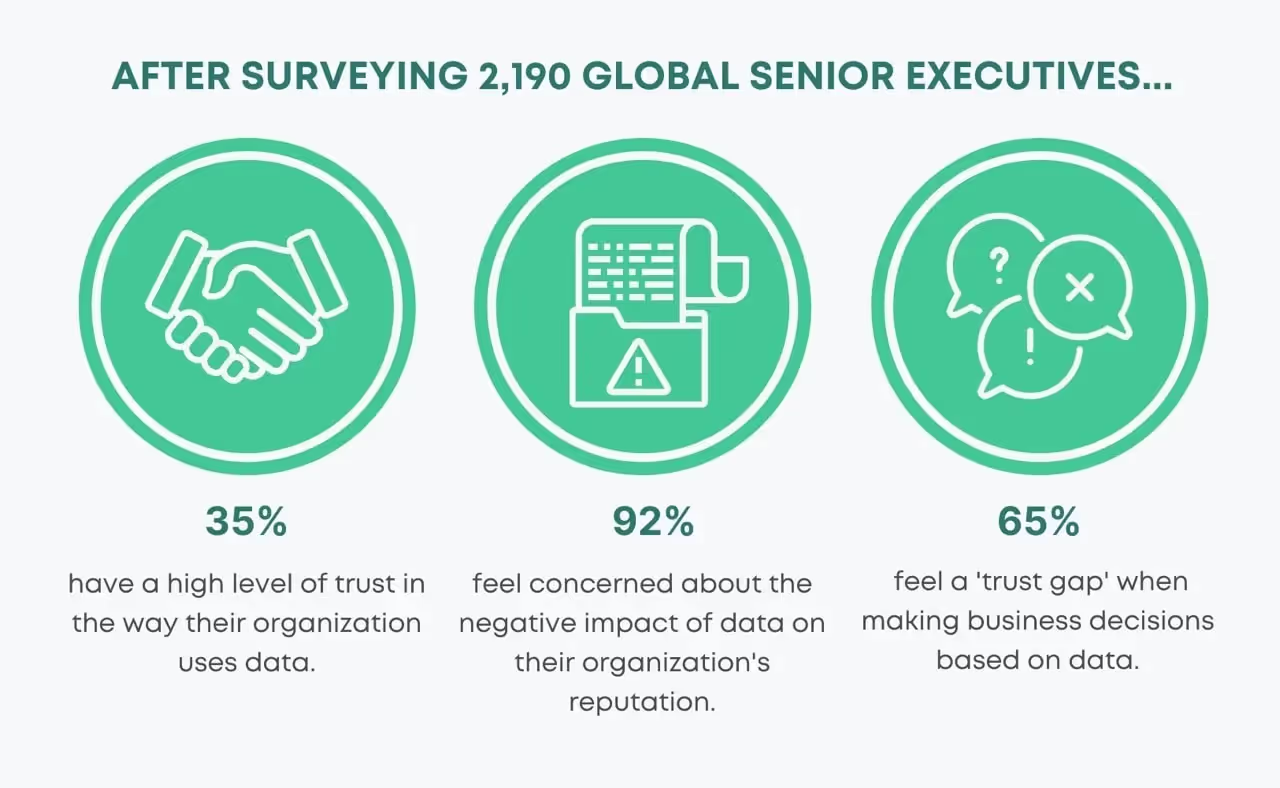

While this might sound obvious, since nobody would knowingly base important business decisions on inaccurate data, research reveals a bitter reality.

Indeed, a report from KPMG International, the audit, tax, and advisory firm, surveyed 2,190 senior executives worldwide and found that only 35% have a high level of trust in the way their organization uses data analytics. In other words, roughly 65% lack that confidence, revealing a 'trust gap' when making business decisions based on their own data.

Secure customer data

Digital businesses rely on customer data to improve the experience of using their products and to offer a better service in the long run. However, this data can contain sensitive or personally identifiable information (PII), and this is where data integrity comes into play: it helps businesses understand their customers in a trustworthy way while still satisfying their need for protection.

Regulatory compliance

Regulations demand accurate and consistent data for compliance reporting to ensure business processes are followed in accordance with prevailing industry standards and government regulations. Otherwise, businesses can be subjected to fines, legal penalties, or even longer-lasting unwanted effects, such as a ruined reputation.

In that sense, data integrity acts as a quality control measure to comply with data protection regulations by making sure data is accurate, secure, and fit for purpose.

Data integrity characteristics

Data integrity is commonly described through a set of characteristics. In regulated industries, the acronym ALCOA+, which originated with the U.S. Food and Drug Administration (FDA), is widely used to define the most important data integrity standards:

Attributable: Data is “attributable” when organizations know how data is created or obtained, and by whom.

Legible: Data is “legible” when organizations are able to clearly read and understand the data and the records are permanent.

Contemporaneous: Data is considered “contemporaneous” when it is recorded at the time the activity or event takes place, so that organizations know how data appeared in its initial state and what happened to it throughout the different stages of its lifecycle.
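To make two of these characteristics more concrete, here is a minimal Python sketch of an append-only audit trail that captures who touched a record (attributable) and when, at the moment it happens (contemporaneous). The `AuditEntry` and `AuditTrail` names are hypothetical, and the history is kept in memory only; a real system would persist it in tamper-evident storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    """One change to a record (hypothetical schema): who did what, and when."""
    record_id: str
    action: str   # e.g. "create" or "update"
    actor: str    # attributable: the user or system responsible for the change
    # Contemporaneous: the timestamp is taken when the entry is created, in UTC.
    recorded_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc))


class AuditTrail:
    """Hypothetical in-memory, append-only history of changes."""

    def __init__(self) -> None:
        self._entries: list[AuditEntry] = []

    def record(self, record_id: str, action: str, actor: str) -> AuditEntry:
        """Append a new entry; existing entries are never modified."""
        entry = AuditEntry(record_id, action, actor)
        self._entries.append(entry)
        return entry

    def history(self, record_id: str) -> list[AuditEntry]:
        """Return every change made to one record, in the order it happened."""
        return [e for e in self._entries if e.record_id == record_id]
```

Making `AuditEntry` frozen means an entry cannot be edited after the fact, which is the property an audit trail depends on.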

Original: Data is “original” when it is preserved in its original state. To achieve this, it is necessary to establish a process that makes copies of an original record distinguishable from it and traceable back to the original source.
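One common way to keep copies traceable is to store, alongside each copy, the identifier of its source record and a cryptographic fingerprint of the original content. The sketch below assumes a hypothetical `RecordCopy` structure and uses SHA-256 from Python's standard `hashlib` module; it illustrates the idea rather than any specific product's implementation.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class RecordCopy:
    """A copy that keeps a pointer back to its original (hypothetical schema)."""
    source_id: str        # identifier of the original record
    source_sha256: str    # fingerprint of the original content at copy time
    content: bytes        # the copied payload
    is_copy: bool = True  # explicitly distinguishes copies from originals


def fingerprint(content: bytes) -> str:
    """Return the hex SHA-256 digest of a record's content."""
    return hashlib.sha256(content).hexdigest()


def make_copy(source_id: str, original: bytes) -> RecordCopy:
    """Create a copy that can be traced back to its original."""
    return RecordCopy(source_id=source_id,
                      source_sha256=fingerprint(original),
                      content=bytes(original))


def copy_matches_original(copy: RecordCopy, original: bytes) -> bool:
    """True only if the copy is unaltered AND the original is unchanged too."""
    return fingerprint(copy.content) == copy.source_sha256 == fingerprint(original)
```

If either the copy or the original is modified later, the fingerprints no longer line up and the check fails.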

Accurate: Data is “accurate” when it is correct, truthful, and conforms to the expected format.

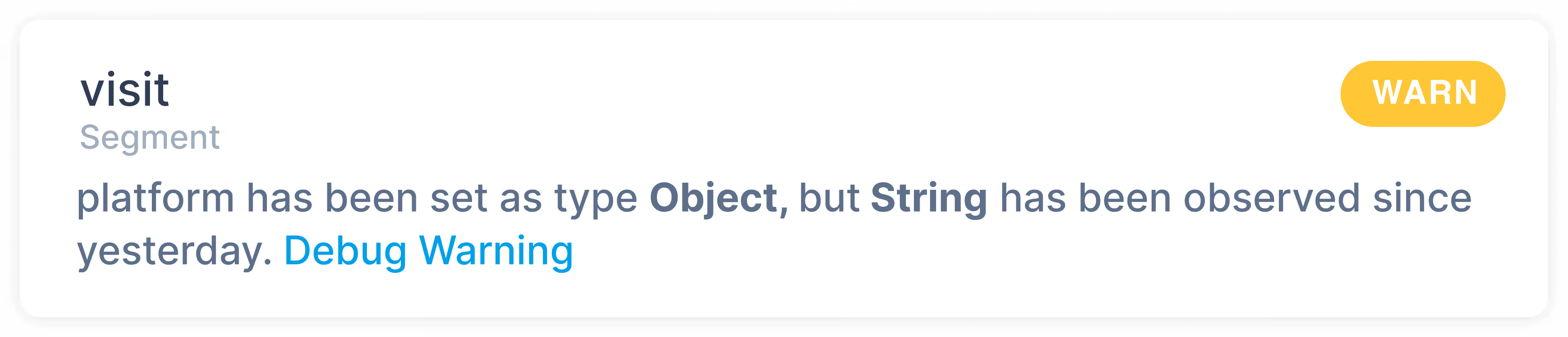

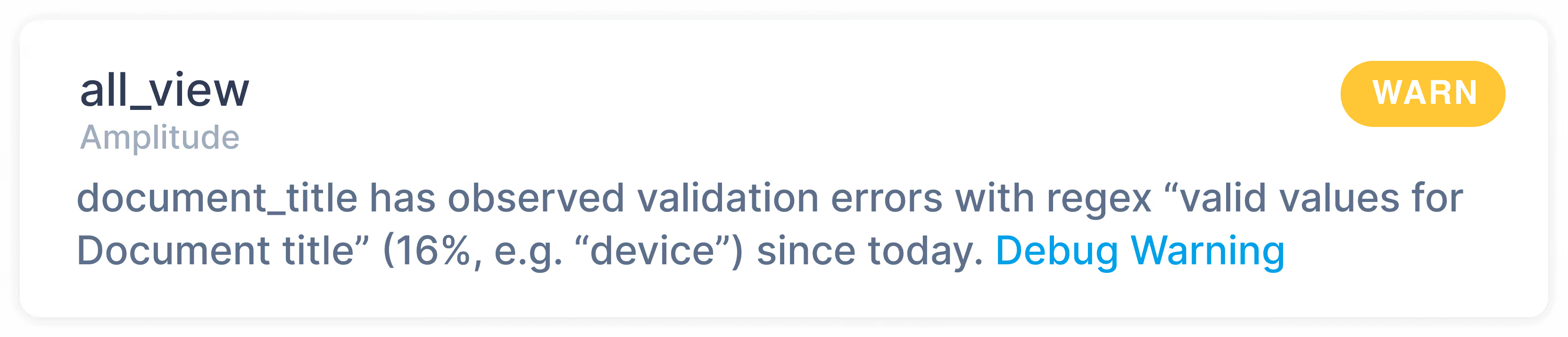

Trackingplan notifies you about type collision errors whenever it detects that your data does not conform to your type specifications. Moreover, you can also set up complex validation rules to be notified whenever your properties, events, or UTM parameters fail validation.
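Type checks of this kind boil down to comparing each incoming value against an expected type. The following is a minimal, generic sketch, not Trackingplan's implementation or API: `EVENT_SPEC` is a hypothetical tracking-plan specification, and `type_collisions` is a hypothetical helper that reports every property whose value has a different type than specified.

```python
from typing import Any

# Hypothetical tracking-plan spec: expected Python type for each event property.
EVENT_SPEC: dict[str, dict[str, type]] = {
    "purchase": {"order_id": str, "value": float, "currency": str},
}


def type_collisions(event: str, properties: dict[str, Any],
                    spec: dict[str, dict[str, type]] = EVENT_SPEC) -> list[str]:
    """List the properties whose value type does not match the spec."""
    expected = spec.get(event, {})
    errors = []
    for name, value in properties.items():
        want = expected.get(name)
        # Properties absent from the spec are skipped in this simplified check.
        if want is not None and not isinstance(value, want):
            errors.append(f"{event}.{name}: expected {want.__name__}, "
                          f"got {type(value).__name__}")
    return errors
```

For instance, `type_collisions("purchase", {"order_id": 1234, "value": 19.99, "currency": "EUR"})` returns a single error, because `order_id` arrives as an integer instead of a string. A stricter version could also flag properties that the spec does not mention.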

Complete: Data is considered “complete” when every required data element is present and none is missing.

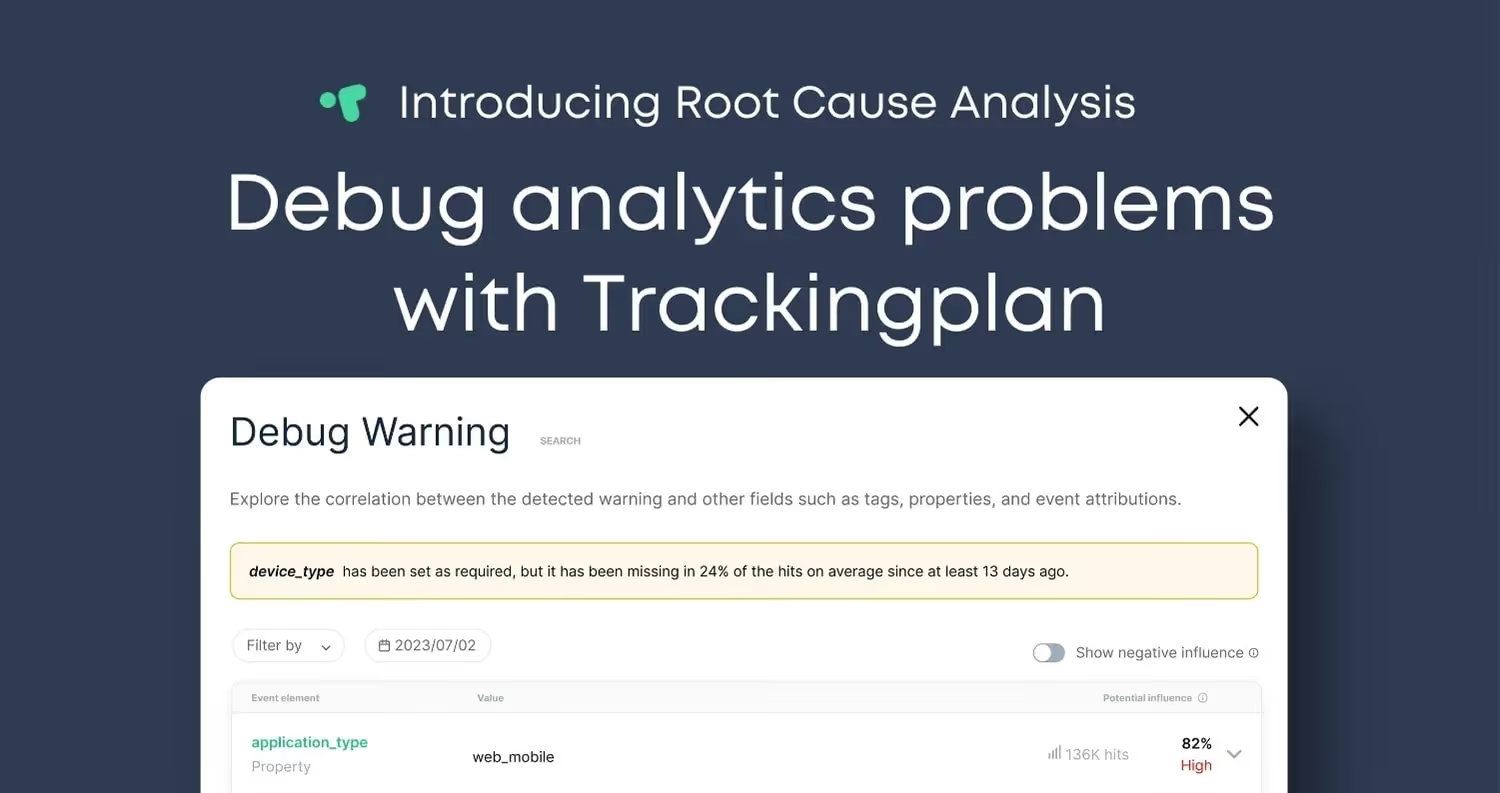

Trackingplan ensures your data always arrives according to your specifications and automatically warns you if any of your required events, properties, or pixels are missing. Moreover, our Debug Warning View helps you discover the cause of your warnings and pinpoint where they occur in record time.
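A basic completeness check can be sketched as a set difference between the properties an event must carry and those that actually arrived. Again, `REQUIRED` and `missing_properties` are hypothetical names used only for illustration, and treating a `None` value as missing is an assumption of this sketch.

```python
from typing import Any

# Hypothetical list of required properties for each event.
REQUIRED: dict[str, set[str]] = {
    "purchase": {"order_id", "value", "currency"},
}


def missing_properties(event: str, properties: dict[str, Any],
                       required: dict[str, set[str]] = REQUIRED) -> set[str]:
    """Return the required properties that are absent or set to None."""
    present = {name for name, value in properties.items() if value is not None}
    return required.get(event, set()) - present
```

An empty set means the event is complete with respect to this (hypothetical) specification.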

Consistency: Data is “consistent” when it is internally coherent and consistent across different sources. Yet, as businesses grow, verifying that this information does not conflict with itself becomes increasingly complex, because data is scattered and siloed across different applications and SaaS repositories.

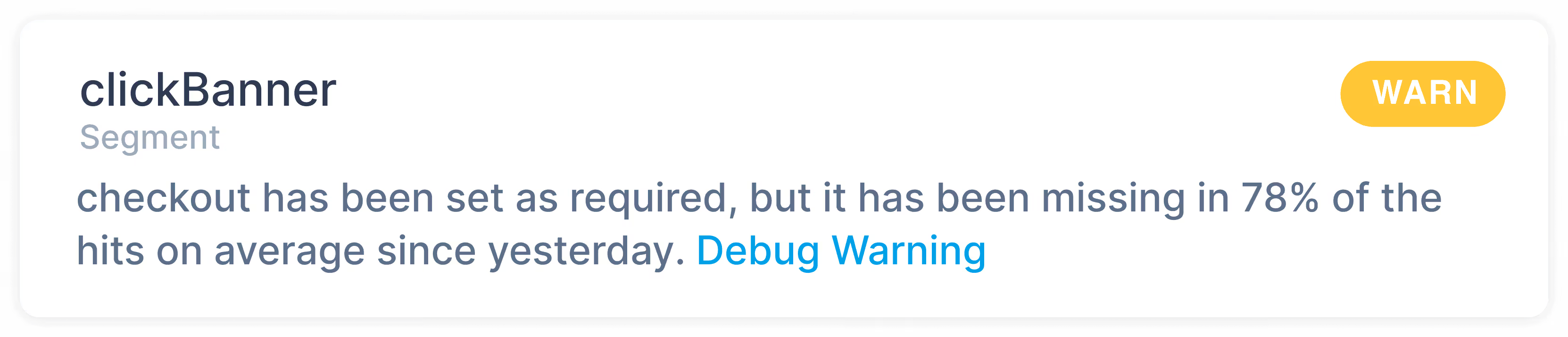

Trackingplan automatically connects all the data that flows between your sites and apps to third-party integrations in just one dashboard to provide you with powerful cross-service insights. This provides a roadmap for every member involved in the data collection process to ensure the data of your digital analytics, acquisition, pixels, and campaigns are accurately collected, responsibly managed, and integrated efficiently across teams and platforms.
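Cross-source consistency can be approximated by comparing the same metric as reported by two systems. This sketch assumes hypothetical per-event counts coming from, say, an analytics tool and an ad platform; the helper `inconsistent_metrics` flags any event that is missing from one source or whose counts differ by more than a relative tolerance (5% by default, an arbitrary choice).

```python
from typing import Optional

# Hypothetical alias: the value reported by each source, or None if absent.
Pair = tuple[Optional[float], Optional[float]]


def inconsistent_metrics(source_a: dict[str, float],
                         source_b: dict[str, float],
                         tolerance: float = 0.05) -> dict[str, Pair]:
    """Return metrics missing from one source or differing beyond `tolerance`."""
    issues: dict[str, Pair] = {}
    for key in source_a.keys() | source_b.keys():
        a, b = source_a.get(key), source_b.get(key)
        if a is None or b is None:
            # Present in only one source: a clear consistency gap.
            issues[key] = (a, b)
            continue
        # Relative difference, measured against the larger of the two values.
        baseline = max(abs(a), abs(b))
        if baseline > 0 and abs(a - b) / baseline > tolerance:
            issues[key] = (a, b)
    return issues
```

With `{"purchase": 1000, "signup": 250}` from one source and `{"purchase": 930, "signup": 248, "add_to_cart": 40}` from the other, `purchase` (7% apart) and `add_to_cart` (absent from the first source) are flagged, while `signup` (under 1% apart) is not.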

Enduring: Remember the fortress that remains untampered and secure regardless of the passage of time or how often it is accessed? That is what endurance is about. To achieve it, specific steps should be taken, including putting in place robust, tested data backup systems and disaster recovery plans.

Compliance: Data is "compliant" when it meets industry standards and government regulations, such as data privacy regulations or any other compliance standards.

Trackingplan’s Privacy Report allows you to see at a glance which private data your site is collecting from your users and forwarding to third parties. That way, personal data like user emails, IP addresses, SSNs, credit card numbers, and so on will be automatically spotted and labeled to help you detect possible privacy issues or security-sensitive data that should not have been collected or forwarded to your analytics services.
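To give an idea of how automatic PII spotting can work in principle, here is a deliberately simplified sketch that scans a raw payload for email addresses, IPv4 addresses, and card-like numbers, keeping only numbers that pass the Luhn checksum. The patterns and the `find_pii` helper are assumptions for illustration: they are not how Trackingplan's Privacy Report works, they miss many formats (SSNs, for example, are not covered), and they can produce false positives.

```python
import re

# Simplified, illustrative patterns; production PII detection needs far more care.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
IPV4_RE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")
# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(number: str) -> bool:
    """Luhn checksum: double every second digit from the right, sum, test mod 10."""
    digits = [int(ch) for ch in number if ch.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return len(digits) >= 13 and total % 10 == 0


def find_pii(payload: str) -> dict[str, list[str]]:
    """Return the suspected PII found in a payload, grouped by kind."""
    found = {
        "email": EMAIL_RE.findall(payload),
        "ipv4": IPV4_RE.findall(payload),
        "card": [m for m in CARD_RE.findall(payload) if luhn_valid(m)],
    }
    # Keep only the kinds that actually matched.
    return {kind: hits for kind, hits in found.items() if hits}
```

Running `find_pii` over a query string such as `email=jane.doe@example.com&ip=203.0.113.7&cc=4111 1111 1111 1111` would flag all three values, since `4111 1111 1111 1111` is a well-known Luhn-valid test card number.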

Data Integrity vs. Data Quality

While some of the previous characteristics might resonate with the six core dimensions commonly used to measure data quality, data integrity should not be confused with data quality.

Of course, data quality is a crucial part of data integrity. Yet, data integrity encompasses every aspect of data quality and goes further by implementing a set of rules and processes that govern how data is entered, stored, and transferred.

In this sense, data quality is a good starting point, and both data quality and data integrity are crucial when making data-driven decisions. However, because data integrity encompasses the whole data lifecycle, it raises the data’s usefulness to an organization and ultimately drives better business decisions.

Data integrity threats

From human errors to transfer errors between two systems, bugs and viruses, or compromised hardware, data integrity can be compromised in various ways, and the consequences can range from minor inconveniences to major business disasters, depending on the amount of data lost and the nature of the data affected.

Keeping data safe and protecting your company’s data integrity might seem like an overwhelming task. Nonetheless, error detection software can offer a modern alternative to mitigate these risks.

Trackingplan is a fully automated data QA and observability solution for your digital analytics, created to ensure your data never breaks and always arrives according to your specifications by automatically documenting all the data that your apps and websites send to third-party integrations like Google Analytics, Segment, or Mixpanel.

This creates a single source of truth where all teams involved in first-party data collection can collaborate, automatically receive notifications when things change or break in your digital analytics, marketing automations, pixels, or campaigns, and easily debug any problem by identifying the root cause of the issues affecting your data integrity.

Get started today to experience the benefits of Trackingplan first-hand and automatically validate your Digital Analytics health.

For more information, you can always book a demo.