An A/B test platform is a specialized software tool that lets you run controlled experiments. It’s how you compare two or more versions of a webpage, app screen, or email to see which one performs better, taking the guesswork out of your digital strategy.

The platform provides the framework to test changes, gather data on user behavior, and make decisions based on statistical evidence, not just opinions.

Why Your Business Needs an A/B Test Platform

Think of it like a chef perfecting a new recipe. Does it need a pinch more salt? A different herb? Each small adjustment is tested to create the perfect dish. An A/B test platform brings that same scientific rigor to your digital products. It’s your lab for moving beyond gut feelings and "we think" conversations to data-backed evidence.

Instead of just hoping a new headline, button color, or page layout will work, you can run a controlled experiment. The platform shows one version (A) to a portion of your audience and the second version (B) to another. It then tracks how users interact with each to declare a statistical winner based on your goals, like boosting sign-ups or driving sales.
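The split described above is typically done by hashing a stable user identifier, so each visitor keeps seeing the same version on every visit while traffic divides roughly evenly. A minimal sketch in Python, assuming a string user ID and a 50/50 split; the function and experiment names are hypothetical and not any particular platform's API:

```python
import hashlib


def assign_variant(user_id: str, experiment_id: str, variants=("A", "B")) -> str:
    """Deterministically map a user to one variant of an experiment.

    Hashing the experiment ID together with the user ID means the same user
    always gets the same variant, while different experiments split traffic
    independently of each other.
    """
    key = f"{experiment_id}:{user_id}".encode("utf-8")
    # Use the first 8 bytes of the SHA-256 digest as an unsigned integer bucket.
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:8], "big")
    return variants[bucket % len(variants)]


if __name__ == "__main__":
    counts = {"A": 0, "B": 0}
    for i in range(10_000):
        counts[assign_variant(f"user-{i}", "headline-test")] += 1
    print(counts)  # roughly a 50/50 split
```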

From Guesswork to Growth Engine

Without a structured testing process, decisions often come down to personal opinions or the loudest voice in the room—a risky and unpredictable way to operate. A dedicated platform completely changes this dynamic by turning every idea into a testable hypothesis. It gives you the tools to validate ideas with real user behavior, making sure you only invest in changes that actually improve the user experience and your key metrics.

This methodical approach is quickly becoming the standard for any competitive business. The A/B testing software market was valued at $517.9 million in 2021 and is projected to hit $1.916 billion by 2033. This growth shows a clear industry-wide shift toward data-driven optimization. You can read the full research about A/B testing market growth to see how this trend is shaping digital strategy.

An A/B test is only as good as the data it runs on. Running an experiment with flawed analytics is like trying to navigate with a broken compass—the direction it gives you is worthless.

Ultimately, an A/B test platform is more than just a tool; it's an engine for continuous improvement. It builds a culture of learning where teams can fail fast, learn from mistakes, and consistently iterate toward better outcomes.

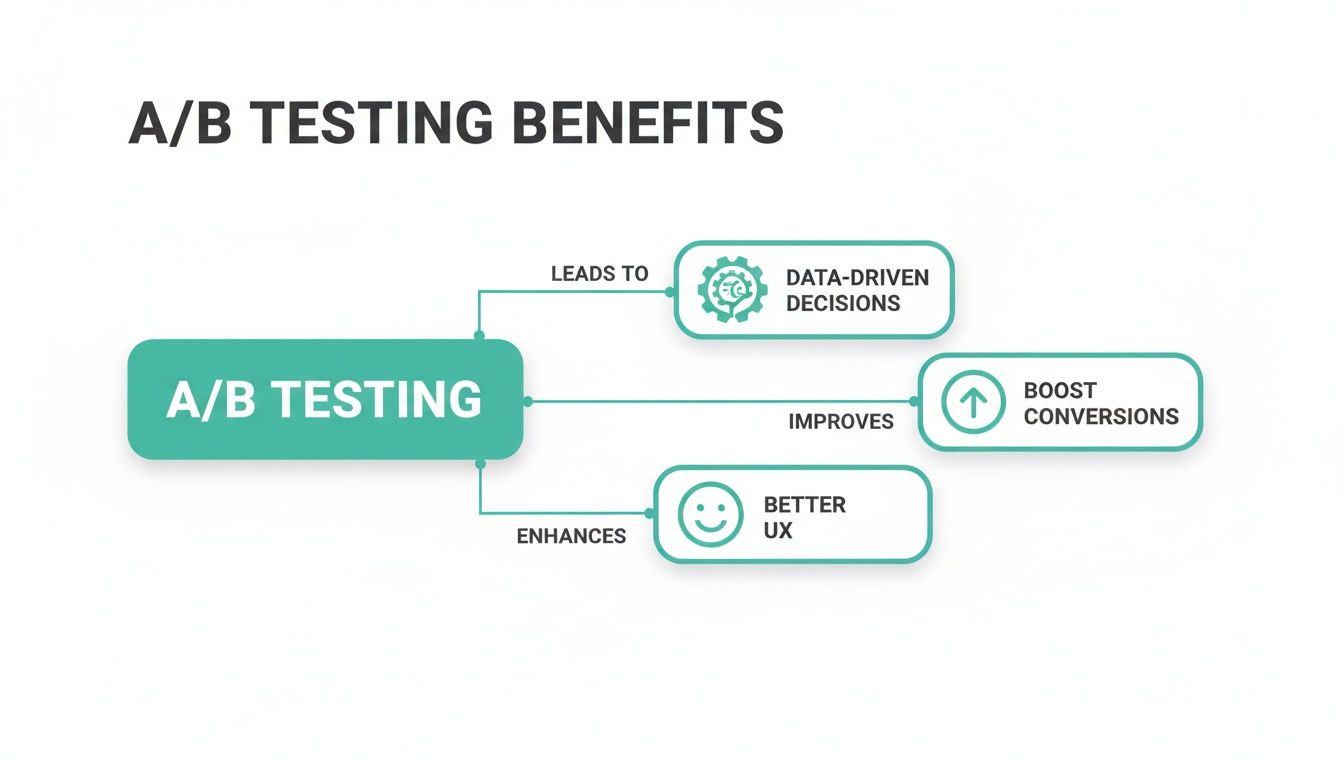

Key Benefits of Using an A/B Test Platform

These platforms have become an essential part of any modern growth strategy because they deliver tangible business results. The table below highlights the core benefits you can expect.

By turning optimization into a systematic process, A/B testing platforms ensure your business is always moving in the right direction—toward growth.

Understanding Core Features and Platform Architectures

To really get a handle on what an A/B test platform can do, you have to look under the hood. These aren't just simple tools; they're sophisticated engines built to run complex experiments with precision. At their core, they’re designed around a set of essential features that give teams what they need to test, learn, and optimize without friction.

The best platforms manage to blend powerful functionality with a user-friendly interface. A handful of must-have features really form the backbone of any solid experimentation program.

Essential Platform Features

A great A/B testing platform does more than just split your traffic. It needs to support the entire experimentation workflow, from the initial idea all the way to the final analysis. Here are the key features to look for:

- Visual Editor: This is a game-changer for non-technical users like marketers and product managers. It lets you create and launch tests with a point-and-click interface, so you can change headlines, swap out images, or edit button text directly on the page without writing a single line of code.

- Advanced Audience Targeting: Not all your users are the same, and your tests shouldn't treat them that way. This feature lets you run experiments on specific segments based on their behavior, demographics, location, or device, which leads to much richer, more specific insights.

- Robust Reporting and Analytics: A powerful dashboard is non-negotiable. It should present results clearly, calculate statistical significance for you, and track the key performance indicators (KPIs) that matter most, like conversion rates, revenue per visitor, and average order value.

These core features directly drive the most important outcomes of A/B testing: they enable data-driven decisions, which in turn lead to higher conversions and a better all-around user experience.

Client-Side vs. Server-Side Architectures

Beneath the surface of any A/B test platform is one of two main architectures: client-side or server-side. Picking the right one is a crucial decision that hinges on your technical resources and what you’re trying to test.

Think of your website as a physical room. Client-side testing is like redecorating it—you can change the paint color, swap the furniture, and move pictures around. These changes happen right in the user's browser after the page loads, which makes it fast and easy for marketers to implement visual tweaks without needing a developer.

Server-side testing, on the other hand, is like doing a major renovation. Instead of just changing the decor, you're knocking down a wall to create an open-plan living space. These are deep, structural changes made on the web server before the page is even sent to the user's browser. This approach is perfect for testing complex features, pricing algorithms, or entirely new user flows.

Client-side testing is perfect for quick, visual experiments, while server-side testing is necessary for deep, functional changes that impact the core user experience or business logic.
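To make the server-side idea concrete, here is a minimal Python sketch of a server choosing a variant of some business logic before it renders the response. The free-shipping rule, the fee amounts, and the function names are hypothetical illustrations, not a real platform's SDK:

```python
import hashlib


def assign_variant(user_id: str, experiment_id: str) -> str:
    """Deterministic 50/50 assignment based on a hash of the user and experiment."""
    digest = hashlib.sha256(f"{experiment_id}:{user_id}".encode("utf-8")).digest()
    return "B" if digest[0] % 2 else "A"


def shipping_fee(order_total: float, variant: str) -> float:
    """Business logic under test (hypothetical): the control always charges a
    flat fee, while the variant offers free shipping on orders of 50 or more."""
    if variant == "B" and order_total >= 50.0:
        return 0.0
    return 4.99


def render_checkout(user_id: str, order_total: float) -> str:
    # The variant is decided on the server before any HTML is generated,
    # so the browser only ever receives one version of the page (no flicker).
    variant = assign_variant(user_id, "free-shipping-test")
    fee = shipping_fee(order_total, variant)
    return f"<p>Shipping: ${fee:.2f}</p>"
```

Because the decision happens in application code, the same pattern works for pricing, ranking, or any other logic a visual editor cannot reach.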

The market is definitely shifting toward more flexible, cloud-based solutions. By 2026, web-based segments are projected to account for 49.60% of total A/B testing software market revenue, mostly because they are so easy to get up and running. The entire market, projected at $1.5 billion in 2026, is expected to hit $4.4 billion by 2035, a growth curve that shows just how much businesses are moving away from rigid, on-premise setups. You can dig into more of this data on the future of the A/B testing market.

Getting a grip on these architectures and features is the first step toward making an informed choice. It ensures the platform you pick not only has the right tools for today but can also grow with you. To learn more about making sure your platform and analytics work together seamlessly, check out our guide on how to integrate with A/B testing tools.

How to Evaluate and Choose the Right A/B Test Platform

Choosing the right A/B test platform can feel overwhelming. The goal isn’t to pick the one with the longest feature list, but the one that actually fits your team’s skills, your existing tech stack, and what you’re trying to achieve as a business. Getting this right lays the groundwork for an experimentation program that produces results you can trust and act on.

A structured evaluation helps you look past the slick sales demos and focus on what will actually work for your day-to-day operations. This means scrutinizing everything from how a tool plays with your current systems to how easy it is for your team to get in and run a test.

Core Evaluation Criteria

When you start comparing platforms, it’s best to use a consistent checklist. This keeps you grounded in your team's real needs and prevents you from being swayed by a single flashy feature. Here are the must-haves to look for:

Ease of Use and Team Adoption: The best platform is one your team actually uses. Look for a clean user interface and a no-code visual editor for your marketing colleagues. The reporting dashboards should be clear and easy to grasp. If running a simple test requires a ton of training or constant developer help, it’s going to create bottlenecks and kill your testing speed.

Integration Capabilities: Your A/B test platform doesn't operate in a silo. It needs to connect reliably with your analytics tools (like Google Analytics or Adobe Analytics), your CRM, and your data warehouse. This integration is the bedrock of trustworthy results, making sure data moves between systems without getting lost or corrupted.

Scalability and Architecture: Think about where you'll be in a year or two. Does the platform handle both client-side and server-side testing? Client-side is perfect for quick visual tweaks, but you’ll want server-side power for testing deeper, more complex business logic as your program grows.

Support and Documentation: When a test breaks or you hit a technical snag, you need fast, knowledgeable support. Check out the quality of their customer service, how thorough their documentation is, and whether they have active community forums or a solid knowledge base.

Data Integration and Analytics Alignment

The link between your A/B testing tool and your main analytics platform is the single most important integration. If your experiment data doesn’t sync up with your core business metrics, you can't measure the real impact of your tests. This kind of data misalignment can lead you to roll out a "winner" that actually tanks key metrics like revenue or customer lifetime value.

A successful A/B test isn't just about a statistically significant lift; it's about a statistically significant lift in the metrics that matter to your business. Seamless data integration ensures you measure what's truly important, not just what's easy to track.

This is also why it's smart to have a good grasp of your entire analytics ecosystem. As you assess an A/B test platform, it's helpful to explore a BI tools comparison to understand different ways of analyzing and reporting on data. This broader context helps you select a platform that slots neatly into your whole data strategy.
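To make the alignment point concrete, here is a minimal Python sketch that joins experiment exposures to order data on a shared user ID and reports both conversion rate and revenue per visitor for each variant. The data layout and field names are hypothetical; the sketch also counts orders that can't be matched to any exposure, a simple warning sign of misaligned data:

```python
from collections import defaultdict


def metrics_by_variant(exposures, orders):
    """Join experiment exposures to orders and report business metrics.

    exposures: list of (user_id, variant) pairs from the testing tool.
    orders:    list of (user_id, revenue) pairs from analytics/commerce data.
    Returns (report, unmatched_orders).
    """
    variant_of = dict(exposures)  # de-duplicates repeat exposures per user
    visitors = defaultdict(int)
    for variant in variant_of.values():
        visitors[variant] += 1

    buyers = defaultdict(set)
    revenue = defaultdict(float)
    unmatched = 0
    for user_id, amount in orders:
        variant = variant_of.get(user_id)
        if variant is None:
            unmatched += 1  # order can't be tied to any experiment exposure
            continue
        buyers[variant].add(user_id)
        revenue[variant] += amount

    report = {
        variant: {
            "visitors": n,
            "conversion_rate": len(buyers[variant]) / n,
            "revenue_per_visitor": revenue[variant] / n,
        }
        for variant, n in visitors.items()
    }
    return report, unmatched


if __name__ == "__main__":
    exposures = [("u1", "A"), ("u2", "A"), ("u3", "B"), ("u4", "B")]
    orders = [("u1", 100.0), ("u3", 30.0), ("u4", 30.0), ("u9", 50.0)]
    report, unmatched = metrics_by_variant(exposures, orders)
    print(report)
    print(unmatched)  # 1 (the order from u9 has no exposure)
```

In this toy data, variant B converts better (1.0 vs 0.5) while variant A earns more per visitor (50.0 vs 30.0), which is exactly the kind of "winner" a clicks-only view would get wrong.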

Client-Side vs. Server-Side Architecture

A major decision you'll face is the testing architecture: client-side or server-side. Client-side testing happens in the user's browser and is great for quick UI changes, while server-side testing happens on your server before the page is even sent to the browser, allowing for deeper, more complex experiments.

Understanding the differences is key to choosing a platform that can grow with you. Here’s a quick breakdown to help you decide what’s best for your needs.

Comparing Client-Side vs Server-Side Testing

While client-side offers speed and simplicity, server-side provides the power and reliability needed for a mature, large-scale experimentation program. Many teams end up needing both.

Ultimately, picking the right A/B test platform is a strategic move. By focusing on usability, integration, scalability, and the right architecture, you’ll find a tool that not only solves today's problems but also helps you build a real culture of experimentation.

Why Data Quality Can Make or Break Your A/B Tests

An A/B test platform is a powerful engine for growth, but it has one critical vulnerability: the quality of the data it runs on. Launching an experiment with flawed analytics is like navigating with a broken compass. The direction it points you in isn't just worthless, it's actively misleading. You might end up crowning a "winner" that's secretly hurting your business.

A/B testing is serious business. It powers decisions that turn small tweaks into major revenue. For performance marketers, a solid test can lift conversion rates by an average of 20-50%, and modern platforms are even using AI to shrink test cycles from weeks down to hours. With over 60% of traffic now coming from mobile, omnichannel testing is the next frontier. But none of this matters if the data underneath it all is junk. Discover more insights about A/B testing statistics on VWO.com.

This is why data integrity is a strategic imperative, not just a technical chore. Bad data leads you to invest in the wrong features and overlook real opportunities, and it ultimately erodes trust in your entire experimentation program.

Common Data Quality Pitfalls That Invalidate Results

Bad data is sneaky. It doesn't announce itself with a flashing red light; it silently corrupts your experiments, leading to skewed results and bad decisions. These subtle errors almost always trace back to tracking bugs that go unnoticed until the damage is done.

Here are a few of the usual suspects that can completely derail your A/B tests:

- Missing Events: A user makes a purchase or signs up, but the event never fires. Your A/B test platform never gets the memo, and the variation's true performance is totally misreported.

- Inconsistent Tagging: Your team rolls out a new campaign, but the UTM tags are a mess—some are inconsistent, others are missing entirely. The traffic can't be segmented correctly, making it impossible to analyze how different audiences are reacting to your test.

- Broken Tracking Implementations: A recent site update or app release accidentally breaks your analytics setup. Suddenly, events that worked perfectly are either gone or firing with the wrong properties, invalidating any test that relies on them.
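The three failure modes above can be caught with fairly simple checks. A minimal Python sketch, assuming a hand-written tracking plan stored as a dictionary (the event names, properties, and allowed UTM values are hypothetical examples), that flags unknown events, missing or mistyped properties, non-standard UTM values, and expected events that never fired:

```python
# Hypothetical tracking plan: each event lists its required properties and types.
TRACKING_PLAN = {
    "purchase": {"order_id": str, "revenue": float, "variant": str},
    "signup": {"plan": str, "variant": str},
}
# Hypothetical naming convention for campaign tags (lowercase values only).
ALLOWED_UTM_MEDIUMS = {"cpc", "email", "social", "organic"}


def validate_event(event: dict) -> list[str]:
    """Return a list of problems found in a single tracked event."""
    name = event.get("name")
    spec = TRACKING_PLAN.get(name)
    if spec is None:
        return [f"unknown event: {name!r}"]
    problems = []
    props = event.get("properties", {})
    for prop, expected_type in spec.items():
        if prop not in props:
            problems.append(f"{name}: missing property {prop!r}")
        elif not isinstance(props[prop], expected_type):
            problems.append(f"{name}: {prop!r} should be {expected_type.__name__}, "
                            f"got {type(props[prop]).__name__}")
    medium = event.get("utm_medium")
    if medium is not None and medium not in ALLOWED_UTM_MEDIUMS:
        problems.append(f"{name}: inconsistent utm_medium {medium!r}")
    return problems


def missing_events(expected: set[str], seen: list[dict]) -> set[str]:
    """Events the plan expects during a test window that never fired at all."""
    return expected - {event.get("name") for event in seen}


if __name__ == "__main__":
    event = {"name": "purchase", "utm_medium": "CPC",
             "properties": {"order_id": "o-1", "revenue": "19.99", "variant": "B"}}
    print(validate_event(event))  # revenue sent as a string, "CPC" not lowercase
    print(missing_events({"purchase", "signup"}, [event]))  # {'signup'}
```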

Misunderstanding or incorrectly implementing attribution models can also throw a massive wrench in your A/B test results. If you can't accurately credit a conversion to the right touchpoint, you can't trust your experiment's outcome.

The Role of an Automated Analytics Safety Net

Manually auditing your tracking for these errors before every single experiment just isn't realistic. It's tedious, slow, and wide open to human error. What you really need is an automated guardian for your data: a system that constantly monitors your tracking to make sure the information flowing into your A/B test platform is clean and reliable from the get-go.

This is exactly where a tool like Trackingplan comes in. Think of it as an automated QA platform, constantly watching over your entire analytics implementation.

Trackingplan doesn’t just find problems; it prevents them from ever compromising your experiments. It acts as a single source of truth, validating every piece of data before it reaches your testing tool, ensuring the integrity of your results.

By discovering and monitoring your analytics setup in real time, it catches issues like missing events, property mismatches, and broken pixels the moment they happen. Instead of your team spending days debugging a failed test, they get an instant alert on Slack or email that pinpoints the root cause, so you can fix it immediately.

This proactive approach is the key to maintaining a high-velocity testing program. To dive deeper into this topic, check out our guide on data quality best practices. By safeguarding your data, you’re protecting the investment you’ve poured into your entire experimentation strategy.

Best Practices for Running Experiments That Work

Owning a great A/B test platform is a bit like having a professional-grade kitchen: the magic isn't in the equipment, but in what you create with it. The most powerful tools are only as good as the experiments you run. Disciplined experimentation, not random testing, is what turns a platform from a simple piece of software into a continuous engine for business growth.

The journey starts with a clear plan. Instead of just throwing ideas at the wall to see what sticks, a structured experimentation roadmap helps you prioritize tests based on their potential impact versus the effort they require. This strategic approach ensures you’re always focused on changes that can actually move the needle on your most important metrics.

Crafting Strong, Testable Hypotheses

Every successful A/B test is built on a strong hypothesis. A good one is far more than a guess; it's a clear, testable statement that connects a proposed change to an expected outcome. It follows a simple but powerful formula: "If we change [X], then [Y] will happen, because [Z]."

For example, a hypothesis like "Making the button bigger will get more clicks" is too vague to learn from. A strong, testable version is: "If we increase the size and contrast of the 'Add to Cart' button, then we will see a 15% increase in add-to-cart actions, because the button will be more visually prominent and draw users' attention."

A well-crafted hypothesis forces you to articulate the "why" behind your test. This critical thinking is the first step in moving from random tweaks to strategic optimization that generates real, actionable insights.

This discipline ensures every experiment has a clear purpose and a measurable definition of success. Without it, you’re just changing things for the sake of change.

Avoiding Common Statistical Traps

Once an experiment is live, the urge to check the results every few hours is almost irresistible. But this is one of the most common—and damaging—mistakes in A/B testing. It's a statistical trap known as "peeking," and it can trick you into declaring a winner prematurely when the results are nothing more than random noise.
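Why peeking is dangerous is easiest to see in a simulation. The Python sketch below runs A/A tests, where both versions share the same true conversion rate so any declared "winner" is a false positive, and compares checking a two-proportion z-test after every batch of visitors against checking once at the planned end. The traffic volumes, the 10% conversion rate, the check interval, and the random seed are arbitrary assumptions:

```python
import math
import random


def z_stat(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-proportion z statistic using the pooled conversion rate."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0
    return (conv_b / n_b - conv_a / n_a) / se


def false_positive_rate(peek: bool, runs: int = 300, n_per_arm: int = 1000,
                        check_every: int = 50, rate: float = 0.10, seed: int = 7) -> float:
    """Share of A/A tests (no real difference) where a 'winner' is declared at 95%."""
    rng = random.Random(seed)
    false_wins = 0
    for _ in range(runs):
        conv_a = conv_b = 0
        for i in range(1, n_per_arm + 1):
            # Both arms have the same true conversion rate.
            conv_a += int(rng.random() < rate)
            conv_b += int(rng.random() < rate)
            if peek:
                checkpoint = i % check_every == 0  # look at the dashboard repeatedly
            else:
                checkpoint = i == n_per_arm        # look once, at the planned end
            if checkpoint and abs(z_stat(conv_a, i, conv_b, i)) > 1.96:
                false_wins += 1
                break
    return false_wins / runs


if __name__ == "__main__":
    print("fixed horizon:", false_positive_rate(peek=False))  # should be near 0.05
    print("peeking:      ", false_positive_rate(peek=True))   # noticeably higher
```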

To run a valid test, you have to commit to the process:

- Determine a sample size and duration beforehand: Use a calculator to figure out how many users you need to reliably detect the smallest effect you care about, and how long the test must run to reach that number.

- Commit to the timeline: Don't stop the test early, even if one variation appears to be winning by a huge margin. Trust the math, not your gut.

- Evaluate significance only at the end: Analyze the results once the test has reached its planned sample size, and declare a winner only if the difference clears your required confidence level, typically 95% or higher.

Another common pitfall is test collision. This happens when you run multiple experiments on the same page or user flow simultaneously, causing their effects to bleed into one another. For instance, a test on a headline might contaminate the results of a separate test on the call-to-action button just below it. A solid A/B test platform with robust scheduling and audience management features can help prevent these collisions and protect the integrity of your results.
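One common way to prevent collisions is to make experiments on the same page mutually exclusive: users are hashed into a fixed set of slots, and each experiment owns a disjoint range of them. A minimal Python sketch of that idea, with hypothetical experiment names and a 50/50 slot split; real platforms implement exclusion in their own ways:

```python
import hashlib
from typing import Optional

# Hypothetical setup: two tests on the same product page split 100 traffic
# slots between them, so no visitor can be enrolled in both at once.
EXPERIMENT_SLOTS = {
    "headline-test": range(0, 50),
    "cta-button-test": range(50, 100),
}


def slot_for(user_id: str, layer: str = "product-page", slots: int = 100) -> int:
    """Hash a user into one of `slots` buckets for a page (the 'layer')."""
    digest = hashlib.sha256(f"{layer}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % slots


def experiment_for(user_id: str) -> Optional[str]:
    """Return the one experiment on this page the user may enter, if any."""
    slot = slot_for(user_id)
    for name, owned_slots in EXPERIMENT_SLOTS.items():
        if slot in owned_slots:
            return name
    return None  # slot not owned by any experiment: user sees the default page


# Within its slice, each experiment would then assign A or B with its own
# independent hash (for example, keyed on the experiment name).
```

The trade-off is traffic: each experiment now sees only half of the page's visitors, so each one needs roughly twice as long to reach its sample size.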

Segmenting Results for Deeper Insights

Declaring a winner is only half the battle. The real gold is often buried in the segments. An experiment might lose overall but turn out to be a massive winner with a specific audience, like new visitors, mobile users, or customers from a particular country.

By digging deeper, you can uncover insights you would have otherwise missed entirely. Always analyze how different variations perform across your key segments:

- Traffic source: Do users from paid search behave differently than those from organic social media?

- Device type: Is one version better for mobile users and another for desktop?

- User behavior: How do first-time visitors react compared to loyal, returning customers?
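Segment breakdowns like these are usually a group-by over the same exposure data. A small Python sketch, assuming each row records the variant, the segment attributes, and whether the user converted (the field names are hypothetical):

```python
from collections import defaultdict


def conversion_by_segment(rows, segment_key: str) -> dict:
    """Conversion rate per (segment, variant) pair.

    rows: iterable of dicts like
          {"variant": "A", "device": "mobile", "converted": True}
    """
    visitors = defaultdict(int)
    conversions = defaultdict(int)
    for row in rows:
        key = (row[segment_key], row["variant"])
        visitors[key] += 1
        conversions[key] += int(row["converted"])
    return {key: conversions[key] / visitors[key] for key in visitors}


if __name__ == "__main__":
    rows = [
        {"variant": "A", "device": "mobile", "converted": False},
        {"variant": "A", "device": "mobile", "converted": True},
        {"variant": "B", "device": "mobile", "converted": True},
        {"variant": "B", "device": "desktop", "converted": False},
    ]
    print(conversion_by_segment(rows, "device"))
```

Keep in mind that each segment holds only part of your traffic, and slicing results many ways raises the odds of a chance "win", so a segment-level result is best treated as a hypothesis for a follow-up test rather than a final conclusion.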

This level of analysis transforms a simple "win" or "loss" into a rich learning opportunity. It helps you understand your customers on a much deeper level, which is how you build a mature culture of experimentation that consistently fuels growth.

How Trackingplan Safeguards Your Experimentation Program

While a powerful A/B test platform gives you the engine to run experiments, Trackingplan acts as its essential insurance policy. Think about it: even the most advanced testing tool is completely at the mercy of the data it receives. A single tracking error can quietly invalidate your results, leading you to make decisions based on flawed insights.

Trackingplan steps in by creating a single source of truth for your analytics. It ensures the data fueling your experiments is accurate and trustworthy right from the start.

This proactive oversight saves your team from the headache of discovering a critical test was corrupted by a tracking bug weeks after it already finished. Instead of spending days on manual, time-consuming audits, you get automated validation and governance. This protects the integrity of your results and, just as importantly, the investment you've poured into your experimentation strategy.

Preventing Corrupted Experiments with Real-Time Alerts

Imagine you’ve just launched a major A/B test on a redesigned checkout flow. Weeks later, you realize the "purchase" event wasn't firing correctly for one of the variations. By the time you spot the error, the data is useless, and all that valuable time and traffic have gone to waste. Trackingplan is built to stop this exact scenario.

It works by continuously monitoring your entire analytics setup in real time. The second an anomaly pops up—like a missing event, a broken pixel, or a property sending the wrong data type—it sends an instant alert to your team through Slack or email.

This means you can catch and fix tracking errors before they have a chance to poison a live experiment. This real-time safety net gives your team:

- Automated Validation: Continuously confirms that all your analytics events and properties are firing correctly, just as you defined in your tracking plan.

- Proactive Error Detection: Spots issues like missing tags, inconsistent UTM parameters, and schema deviations the moment they happen.

- Root-Cause Analysis: Gives developers the context they need—what broke, where it broke, and why—so they can fix issues fast without tedious manual debugging.

Trackingplan transforms your data quality process from a reactive, manual chore into a proactive, automated system. It ensures that the data flowing into your A/B test platform is always clean, complete, and reliable.
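To illustrate the kind of check that would catch the broken checkout scenario described earlier in this section, here is a deliberately simple Python sketch that compares each variant's purchase events per exposure and flags a variant whose rate collapses. It is a generic, hypothetical illustration, not Trackingplan's implementation, and the 50% threshold and minimum-traffic cutoff are arbitrary assumptions:

```python
def tracking_alerts(counts: dict, event: str = "purchase",
                    min_ratio: float = 0.5, min_exposures: int = 500) -> list:
    """Flag variants whose event rate collapses relative to the other variants.

    counts maps variant -> {"exposures": int, event: int}, e.g. the last hour.
    A variant that suddenly records far fewer events per exposure than its
    peers is more likely suffering a tracking bug than a real behavior change.
    """
    rates = {
        variant: c.get(event, 0) / c["exposures"]
        for variant, c in counts.items()
        if c["exposures"] >= min_exposures  # ignore variants with too little traffic
    }
    if not rates:
        return []
    best = max(rates.values())
    alerts = []
    for variant, rate in sorted(rates.items()):
        if rate == 0 or rate < min_ratio * best:
            alerts.append(f"{event} rate for variant {variant} is {rate:.2%} "
                          f"(best variant: {best:.2%})")
    return alerts


if __name__ == "__main__":
    last_hour = {
        "A": {"exposures": 1200, "purchase": 36},
        "B": {"exposures": 1180, "purchase": 0},  # e.g. event broken in the new checkout
    }
    for alert in tracking_alerts(last_hour):
        print("ALERT:", alert)
```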

Freeing Your Team to Focus on High-Impact Testing

Without automated governance, analytics QA easily becomes a major bottleneck. Teams burn countless hours manually checking tracking implementations before every single experiment, which slows down the whole testing cycle. This manual work isn't just inefficient; it's also prone to human error, leaving your experiments exposed.

By automating analytics governance, Trackingplan lifts this burden completely. It acts as a tireless watchdog, giving your team the confidence that their data is accurate without the manual grind. This newfound efficiency lets your analysts, marketers, and developers shift their focus away from debugging and back to what they do best:

- Designing creative, high-impact experiments.

- Analyzing clean results to uncover deep user insights.

- Building a culture of rapid, data-driven growth.

Ultimately, Trackingplan doesn’t replace your A/B test platform; it makes it stronger. By guaranteeing the quality of your underlying data, it allows you to trust your results, make decisions with confidence, and get the maximum business value out of your entire experimentation program.

Frequently Asked Questions

When you’re diving into the world of A/B testing, a lot of questions pop up. It’s natural to want to get things right from the start to make sure your results are solid. Let's tackle some of the most common queries about using an A/B test platform effectively.

When Should I Use Server-Side vs Client-Side Testing?

This is a classic question, and the answer comes down to what you’re trying to change. There's a time and place for both.

Go with Client-Side Testing for visual, front-end tweaks. Think simple changes to headlines, button colors, or swapping out an image. These tests are straightforward to implement with a visual editor because they run in the user's browser and don't touch your site's backend code.

Opt for Server-Side Testing when your experiment involves deeper, more complex logic. This is your go-to for things like testing a new pricing algorithm, overhauling a checkout flow, or rolling out a new feature to a small group of users. The changes are made on your server before the page even reaches the user, which guarantees a clean experience with zero flicker.

How Do I Calculate the Right Sample Size?

Figuring out your sample size before you launch is non-negotiable if you want statistically significant results. Too small, and your findings are just noise. Too big, and you're just wasting time and valuable traffic.

Most A/B testing platforms have calculators built right in, and they all hinge on a few core inputs:

- Baseline Conversion Rate: This is the current performance of your original version (the control).

- Minimum Detectable Effect (MDE): What's the smallest lift you care about detecting? A smaller MDE means you'll need a much larger sample size to spot it reliably.

- Statistical Significance: This is your confidence level. 95% is the industry standard, meaning you accept a 5% chance of declaring a winner when there is no real difference.

- Statistical Power: This is the probability of detecting a real effect at least as large as your MDE. 80% is a common default, and many calculators apply it automatically.

Plug those numbers in, and the calculator will tell you exactly how many visitors each variation needs to produce a trustworthy outcome.
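Under the hood, many calculators use something close to the standard normal-approximation sample size formula for comparing two proportions. A Python sketch of that formula, assuming a two-sided test, an MDE expressed as a relative lift, and 80% power by default; your platform's calculator may use a different method (for example, sequential or Bayesian statistics) and return different numbers:

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline: float, mde_relative: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed in each variant for a two-sided two-proportion z-test.

    baseline:     control conversion rate, e.g. 0.04 for 4%
    mde_relative: smallest relative lift worth detecting, e.g. 0.10 for +10%
    alpha:        1 - confidence level (0.05 for 95%)
    power:        probability of detecting a true effect of size MDE
    """
    p1 = baseline
    p2 = baseline * (1 + mde_relative)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


if __name__ == "__main__":
    # 4% baseline, detect a +10% relative lift (4.0% -> 4.4%)
    print(sample_size_per_variant(0.04, 0.10))  # roughly 39,500 per variant
```

Because the required sample grows with the inverse square of the absolute difference, halving the MDE roughly quadruples the number of visitors you need.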

An inconclusive A/B test is not a failure; it’s a learning opportunity. It often means your change didn't have a strong enough impact on user behavior, which is a valuable insight in itself.

What Should I Do with an Inconclusive Test Result?

It happens to everyone. You run a test, wait patiently, and the results come back without a clear winner. Don't get discouraged—this isn't a dead end. An inconclusive result just tells you that your variation didn't move the needle enough to be statistically better (or worse) than the control.

Instead of just tossing the idea, use it as a chance to dig deeper. First, segment your results. Did the variation perform better for a specific group, like new visitors or users on mobile? If you still don't see a lift, it's time to revisit your hypothesis. Was the change you proposed bold enough? This is valuable feedback that will help you formulate a stronger, more impactful hypothesis for your next experiment.
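An inconclusive result usually shows up as a confidence interval for the difference between variants that includes zero. The Python sketch below makes that explicit, assuming simple conversion counts and a normal-approximation (Wald) interval rather than whatever method your platform uses. A wide interval that straddles zero also tells you which effect sizes the test could not rule out, which helps when deciding how bold the next hypothesis should be:

```python
import math
from statistics import NormalDist


def compare_variants(conv_a: int, n_a: int, conv_b: int, n_b: int,
                     confidence: float = 0.95):
    """Difference in conversion rate (B - A) with a confidence interval.

    Uses the normal (Wald) approximation, which is reasonable for large samples.
    Returns (difference, (low, high), verdict).
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    diff = p_b - p_a
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    low, high = diff - z * se, diff + z * se
    if low > 0:
        verdict = "B is better"
    elif high < 0:
        verdict = "A is better"
    else:
        verdict = "inconclusive"  # the interval includes zero
    return diff, (low, high), verdict


if __name__ == "__main__":
    # 5.0% vs 5.2% conversion on 10,000 visitors each
    print(compare_variants(500, 10_000, 520, 10_000))
```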

By ensuring the integrity of your data, Trackingplan gives you the confidence to run high-impact experiments and trust the results. Safeguard your entire experimentation program and learn more at https://trackingplan.com.