You launch a Meta campaign with solid creative, a clean offer, and a budget that should be enough to learn. A few days later, CPA is moving the wrong way, ROAS looks soft, and nothing in the ad account explains the drop clearly. In a lot of accounts, that problem starts below the campaign layer.

It starts in the event stream.

Event Match Quality is often treated as a reporting detail. It isn't. If Meta can't reliably connect your conversion events to real user profiles, the platform has weaker signals for bidding, attribution, and audience refinement. You can keep tweaking creative and targeting, but if the underlying event payload is thin, inconsistent, duplicated, or stripped by consent and browser limitations, you won't get stable optimization.

The fix isn't "send more data" as a vague instruction. To improve EMQ score, you need to inspect the full path from browser to server to destination, then remove the specific faults that lower match quality. That means auditing identifiers, checking deduplication, validating schemas, and watching your stack continuously instead of after performance falls.

Why Your Ad Performance Depends on Event Match Quality

A campaign can look healthy at launch and still drift off target once Meta starts optimizing against weak conversion signals. The ad account shows higher CPA, less stable attribution, and audience performance that is harder to explain. In many cases, the problem is not media strategy first. It is match quality.

Event Match Quality, or EMQ, is Meta's indicator of how effectively your events can be tied back to real users. Higher scores usually mean Meta can use your conversion data with more confidence for bidding, attribution, and retargeting. Lower scores usually point to a specific failure in the pipeline, such as missing identifiers, poor normalization, duplicate events, broken deduplication, or gaps between browser and server delivery.

That distinction matters because Meta does not optimize from intent alone. It optimizes from the event payload you send. If Purchase events arrive with weak identity signals, or if the browser version and server version disagree, the platform has less usable feedback. Results often show up as slower learning, noisier reporting, and weaker audience building.

What EMQ changes in practice

EMQ affects more than reporting hygiene. It shapes how much trust Meta can place in the events driving your campaigns.

A strong score on Purchase but a weak score on AddToCart usually means the middle of the funnel is losing identifiers before users reach checkout. A weak score across every event often points to broader implementation problems, such as consent handling, hashing mistakes, missing advanced matching fields, or inconsistent event IDs. Those are engineering problems with media consequences.

Teams working on creative, bids, and account structure should treat measurement quality as part of performance work, not as a separate cleanup project. That is also why broader effective PPC campaign strategies only go so far if the optimization signal underneath them is degraded.

Why browser-only tracking breaks down

The browser pixel still matters. It just cannot carry the full load anymore.

Client-side tracking loses data in predictable ways. Browsers restrict storage. Ad blockers suppress requests. Consent tools can block identifiers on some pages but not others. Front-end changes ship fast, and tracking often breaks subtly after a release. EMQ drops because the event stream becomes partial or inconsistent, not because the media team made a bad optimization choice.

That is why many teams add server-side delivery through CAPI. Done well, it improves signal resilience and fills gaps the browser misses. Done poorly, it creates a second source of bad data. Duplicate purchases, mismatched timestamps, inconsistent parameter mapping, and broken deduplication can hurt match quality instead of improving it. Trackingplan's guide to the FB Conversion API in 2026 is useful for this reason. It explains CAPI as an implementation and validation problem, not just a new pipe for events.

Low EMQ is rarely fixed by sending more fields everywhere and hoping the score rises. The reliable path is to find where identity data is being lost, where browser and server events diverge, and which event types are failing first. That is what makes EMQ a performance metric worth debugging, not just a number to monitor.

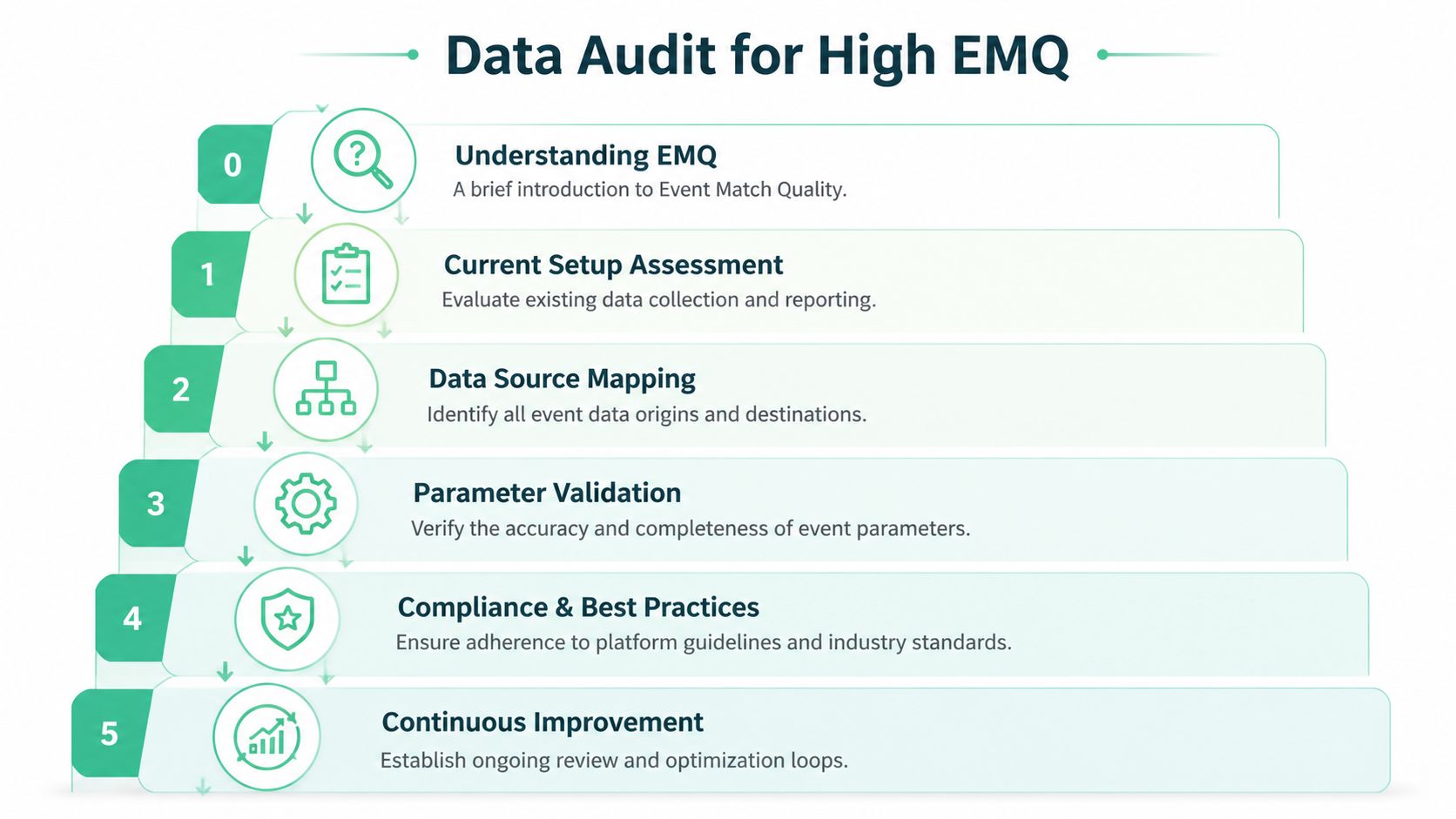

Auditing Your Data The Foundation of a High EMQ Score

An EMQ score drops after a site release, paid performance softens, and the first reaction is usually to add more fields or push harder on CAPI. That is how teams lose weeks. The faster path is to audit the pipeline and find the exact point where matchable data stops making it to Meta.

Real gains come from diagnosis, not guesswork. Teams that improve EMQ often see better efficiency because Meta can match more events to real users, but the score only improves when the underlying collection and mapping problems are fixed. An audit gives you that root-cause view.

Read the score correctly

A common mistake is to look at the aggregate EMQ score and assume the account is fine. EMQ has to be read by event type, because each event has different opportunities to capture identity.

PageView usually scores lower. Purchase usually scores higher because checkout collects stronger first-party data. If Purchase is healthy but AddToCart is weak, the issue is often upstream. Identity is being captured late, or it is not being persisted across the journey.

Use EMQ as a diagnostic signal.

A practical way to frame it:

| EMQ range | What it usually means | Practical implication |

|---|---|---|

| 9 to 10 | Strong identifier completeness and matching | Meta has better inputs for optimization |

| 7 and above | Good enough for efficient optimization in many setups | A realistic target for most teams |

| 0 to 4 | Clear data quality or delivery issues | Campaign efficiency usually suffers |

Audit by event, not just by pixel

Start in Events Manager, but do not stop at the top-line pixel view. Review Purchase, Lead, InitiateCheckout, AddToCart, and the custom events that drive bidding or reporting in your business.

What matters is the pattern across events and across delivery methods. If browser Purchase events carry strong identifiers but server Purchase events do not, that points to enrichment or mapping gaps. If one storefront sends clean AddToCart events and another does not, your schema is drifting between implementations. If Lead performs well on web and poorly in app, the handoff between SDK, backend, and Meta is the first place to inspect.

For a practical checklist focused on implementation gaps, use this Meta Conversions API audit guide.

Check the identifiers that actually move match quality

Teams waste time filling payloads with low-impact fields while the identifiers Meta relies on most are missing, malformed, or unavailable on key events.

Audit priority

High priority identifiers include Click ID and email.

Medium priority data includes phone numbers and browser identifiers.

Lower priority fields include things like name or postcode.

Trade-offs become apparent. A form can collect email, but if normalization is inconsistent between browser and server, the same user may look different in each payload. A click ID can exist on landing, but redirects, consent handling, or session resets can strip it before conversion. A phone number can be available at checkout, but many server-side pipelines never reuse it for downstream events.

A useful audit answers specific questions:

- Is email captured and passed where consent allows it? Problems often start with formatting, hashing, or sending different values from different systems.

- Is click ID preserved across the journey? If redirects or tag logic break it, Meta loses one of the strongest matching signals.

- Are phone values reused across events? Many stacks collect them once and never attach them again.

- Are

_fbpand_fbcpresent when expected? Missing cookie values can reduce matchability even when other fields exist. - Do browser and server events use the same user data and formatting rules? If not, EMQ often diverges by channel.

Map the full event path

Events Manager shows the outcome. It does not show where the failure started.

Trace each important event from collection to dispatch. Start at the form, checkout, login state, or app screen where the data originates. Then follow it through the data layer, tag manager, consent layer, browser pixel, server endpoint, middleware, CDP, and the final Meta payload. In many implementations, the break happens between systems. A field is renamed, dropped conditionally, hashed twice, or blocked on one path and not the other.

A useful audit map includes:

- Collection points such as forms, checkout, login, and app screens

- Identity persistence across sessions and touchpoints

- Transformation rules such as formatting, hashing, or field renaming

- Destination payloads sent to Meta

- Validation layer that confirms what shipped

If you cannot tell whether a missing identifier was never collected, removed by consent logic, renamed in middleware, or lost before dispatch, the issue is not just EMQ. The issue is poor observability.

What usually turns up

The findings are usually predictable. Field names differ between browser and server implementations. Old pixel logic survives a redesign. Checkout collects strong identifiers, but only the browser event receives them. Consent rules are stricter on one template than another. Deduplication is set up for Purchase but ignored for mid-funnel events.

None of that is glamorous. It does affect EMQ.

The goal of the audit is not perfection. The goal is to document where match quality breaks, why it breaks, and which system owns the fix. Once that is clear, you can improve EMQ at the source instead of sending more data and hoping the score rises.

Architecting Data Collection for Peak EMQ

A durable EMQ setup is built, not patched. If your collection model still depends mostly on browser execution, every blocker, cookie restriction, consent edge case, and front-end regression will chip away at match quality.

The practical architecture is usually a hybrid one. Keep the browser signal, because it captures user-side context and immediate interaction data. Add server-side delivery through CAPI, because it gives you a more resilient path for enriched identifiers and conversion events that shouldn't depend entirely on the browser.

Client-side versus server-side

This isn't a philosophical debate. Each method solves different problems.

| Approach | Where it helps | Where it breaks |

|---|---|---|

| Browser pixel | Immediate page and interaction events, simple deployment | Vulnerable to blockers, browser restrictions, front-end drift |

| Server-side CAPI | More resilient delivery, stronger control over enrichment and normalization | Needs proper mapping, consent handling, and deduplication |

| Hybrid setup | Redundancy plus better event quality when implemented well | Complexity rises fast if governance is weak |

The reason hybrid wins is coverage. The reason hybrid fails is inconsistency.

What actually improves matching

Implementing server-side tracking through CAPI and unifying sessions with first-party data can boost match rates by 25% to 40%, and configuring custom subdomains to set first-party _fbp and _fbc cookies correctly can add another 15% to 20% uplift in tracking accuracy, according to this practical guide to improving Meta EMQ.

Those gains don't come from "server-side" as a label. They come from three concrete improvements:

- Richer identifiers sent with conversion events

- Better session continuity across touchpoints

- Less dependence on browser execution for critical delivery

Build around first-party identity

The core design principle is simple. Collect identifiers where the user gives them to you, normalize them once, and reuse them consistently across relevant events.

That usually means:

- Form and checkout capture for email and phone

- Session stitching so identifiers gathered late in the journey can enrich earlier or parallel events where appropriate

- Consistent transport rules so browser and server paths don't use conflicting field names or formats

A lot of teams collect enough data to match well but fail to pass it through the whole chain. The issue isn't absence. It's fragmentation.

Practical rule: If the checkout captured email but your server-side Purchase event doesn't include the normalized hashed value, your stack collected identity data without converting it into match quality.

Hashing and parameter discipline

For user-submitted identifiers, hashing isn't optional. It needs to be handled consistently and predictably. The common rule is to normalize values first, then hash them using SHA-256 before transmission where required.

At the same time, not everything should be hashed. Technical values such as browser and click identifiers must remain usable as identifiers in the payload. Teams often hurt EMQ by treating every field the same.

A clean implementation standard usually includes:

Normalize before hashing

Lowercase, trim, and standardize user-entered values before hashing.Separate identity fields from technical identifiers

Email and phone follow one process. Browser and click IDs follow another.Use the same logic everywhere

If web and server hash or format fields differently, event quality drifts.Document the schema

The biggest EMQ regressions often happen after a redesign or app release because the old schema was tribal knowledge.

Preserve _fbp and _fbc correctly

These fields often get treated as implementation trivia. They aren't. They are part of the practical matching layer.

If your setup uses a custom subdomain and maintains first-party cookie behavior correctly, those identifiers are more likely to survive browser friction and be available to the server-side event stream. If they disappear between landing page and conversion, match quality suffers even when email capture is strong.

Redundancy is useful only when the events align

A hybrid design should produce one coherent event model. That means:

- the same event names across browser and server

- the same logical definitions for Purchase, Lead, and checkout milestones

- aligned user fields

- reliable event deduplication

If browser emits PurchaseComplete while server emits OrderPlaced, you've made your reporting and matching harder for no gain. Same action, same business meaning, same identifiers. Keep it unified.

For teams planning the broader implementation, Trackingplan's server-side tracking overview is a practical starting point because it treats server-side as part of data governance rather than a standalone deployment task.

What doesn't work

Some fixes sound sensible but rarely move EMQ much.

- Adding low-priority fields first. Names and postcodes can help fill gaps, but they won't compensate for missing anchors like email or click ID.

- Chasing perfect 10s on every event. Some event types naturally carry less identity data.

- Treating CAPI as a copy of the pixel. If server-side just repeats low-quality browser data, it adds complexity without much value.

- Ignoring consent and validation design. A technically complete architecture can still fail if the consent layer strips fields unpredictably or if no one checks payload quality after release.

The best EMQ architecture isn't the most elaborate. It's the one that keeps identifiers consistent, preserves first-party context, and sends trustworthy events every time.

Diagnosing and Fixing Common EMQ Killers

Once the architecture is in place, the hard part becomes operational. EMQ usually drops because one of a few recurring faults enters the stack undetected, then spreads across campaigns before anyone notices.

The three most common killers are duplicates, schema mismatches, and privacy or consent mistakes.

Without proper validation, duplicate events from parallel client-side and server-side stacks can increase CPA by as much as 18%. Unaligned schemas between web and app events in hybrid setups can create a 1 to 2 point variance in EMQ, and PII leaks can trigger privacy flags that harm match quality, as noted in this guide on improving Event Match Quality.

Duplicate events

This is the classic hybrid-stack failure. The browser pixel fires a Purchase, the server sends the same Purchase, but the two events don't share the right deduplication logic. Meta receives both as separate conversions.

Symptoms usually show up as inflated counts, unstable CPA, and suspicious differences between platform reporting and backend sales records.

Common root causes include:

- Missing shared event identifiers

- Different event naming between browser and server

- Retries or queue replays that create fresh event identities

- Parallel implementations owned by different teams

Fixing it means treating deduplication as a product requirement, not an implementation detail. Browser and server need a shared event definition and a shared deduplication key for the same real-world action.

When duplicate events exist, the issue isn't just overcounting. The bidding system also learns from distorted conversion volume.

Schema mismatches across web, app, and server

This issue gets worse as stacks mature. Web events often evolve one way, app events another, and server-side integrations become a third dialect.

The names may look close enough for dashboards, but the payloads aren't aligned. One version passes email and click ID. Another sends only device-level context. A third renames fields during middleware processing. EMQ becomes uneven because Meta sees related actions as different-quality events.

A practical fix looks like this:

| Problem | Typical cause | Fix |

|---|---|---|

| Different event names for the same action | Separate teams or tools define events independently | Create one canonical event dictionary |

| Identifier gaps by platform | App, web, and server collect different fields | Define minimum required identifiers per event |

| Field renaming during transport | Middleware or CDP mappings diverge | Validate payloads at each hop |

Consent misconfiguration and privacy errors

Consent tooling can lower EMQ even when the rest of the implementation is solid. The most common failure isn't lack of consent support. It's inconsistent consent behavior across browser, app, and server paths.

Examples include browser events suppressing identifiers while server events still send them, or vice versa. Another problem is overzealous stripping, where consent logic removes fields that should remain available in allowed scenarios. And the worst version is sending PII in the wrong form, which creates compliance risk and can hurt match quality.

What to check:

- Whether the CMP applies the same rules across all data paths

- Whether allowed identifiers are being normalized and handled correctly

- Whether blocked identifiers are completely blocked, not just hidden in one layer

- Whether server events inherit consent state correctly

Why manual debugging breaks down

You can find some of these issues by sampling payloads in browser tools and comparing logs manually. That works for a one-time investigation. It doesn't work well for active stacks with multiple releases, agencies, app versions, and server-side transformations.

By the time someone notices a reporting discrepancy, the underlying fault may have been live for days. EMQ debugging gets easier when you can see event drift, parameter loss, and rogue changes as they happen instead of reconstructing them later.

Proactive Monitoring with Trackingplan

EMQ isn't typically lost due to a lack of theoretical knowledge. It's lost because the stack changes constantly. A checkout tweak removes a field. A mobile release renames an event. A server-side connector retries badly. A consent banner update strips identifiers from one path but not another.

That's why point-in-time audits aren't enough.

EMQ is calculated from recent 48-hour event data, but score changes are best judged over 7-day windows for stability, and real-time monitoring helps detect rogue events and PII leaks before they create longer optimization problems, as discussed in this Trackingplan video on Event Match Quality and monitoring.

Manual audits fail for a simple reason

They are too slow for modern analytics stacks.

A manual review can tell you what was true when you checked. It won't tell you what changed the next morning after a deploy, a template update, or an SDK release. That gap matters because EMQ isn't a static configuration state. It's a live output of your implementation quality.

Here is the operational difference:

Manual audit

Useful for baseline reviews and major investigations. Weak for ongoing control.Continuous monitoring

Useful for catching missing identifiers, broken pixels, schema drift, traffic anomalies, and consent-related changes as they happen.

What to monitor continuously

If the goal is to improve EMQ score and keep it there, monitor the failure modes, not just the score itself.

That usually means watching for:

- Missing events that should appear after key user actions

- Rogue events introduced by new code or tag manager changes

- Property mismatches where expected identifiers disappear or change type

- Campaign tagging issues that complicate attribution review

- Potential PII leaks that create privacy risk and data quality problems

- Differences across web, app, and server destinations

The point isn't to create more dashboards. It's to shorten the time between a tracking error appearing and someone fixing it.

For teams comparing tooling options, how Trackingplan works gives a useful picture of this category: automated discovery of implementations, real-time monitoring of analytics and marketing pixels, and alerts through collaboration channels when something breaks.

Why observability changes EMQ work

An observability layer gives analysts and engineers a shared source of truth. Instead of arguing whether the bug started in the data layer, the tag manager, the app SDK, or the server endpoint, the team can inspect what changed.

That matters even more in multi-team environments. Marketing owns campaign outcomes. Engineering owns releases. Analytics owns definitions. Agencies may own part of implementation. Without a common record of tracking behavior, EMQ issues turn into slow cross-functional investigations.

A platform such as Trackingplan is useful here because it automatically discovers martech implementations across web, app, and server-side stacks, monitors analytics and attribution pixels, flags schema mismatches, rogue events, missing pixels, UTM errors, consent issues, and potential PII leaks, and alerts teams through email, Slack, or Microsoft Teams. That makes it easier to manage the operational complexity that causes EMQ drift.

A short walkthrough helps make that concrete:

What a good monitoring process looks like

The healthiest teams don't treat EMQ as one person's metric. They define ownership by failure type.

Good governance means marketing can spot the impact, analytics can verify the payload, and engineering can fix the source without debating whose dashboard is "right."

A working process usually includes:

- A canonical tracking plan so event definitions don't drift

- Automated alerts for missing or malformed events

- A release check for web, app, and server changes

- A privacy review for identifier handling and consent behavior

- A weekly quality review that looks at event stability, not just ad outcomes

When that process is in place, EMQ becomes much easier to maintain. Problems still happen. They just stop staying hidden long enough to drag campaign performance with them.

Conclusion From Reactive Fixes to Proactive Governance

If you want to improve EMQ score, the core job isn't adding random fields until Meta shows a nicer number. The job is making your event pipeline trustworthy.

That starts with an audit. You identify which events are weak, which identifiers are absent, and where data is being lost. Then you fix the collection architecture so browser and server events work together, first-party identifiers are handled correctly, and event definitions stay consistent across systems.

After that, the work shifts from implementation to governance.

The biggest mistake teams make is treating EMQ as a one-time repair. It isn't. Front ends change, apps ship updates, consent logic evolves, and server-side mappings drift. Without continuous monitoring, the same faults return in slightly different forms and campaign efficiency slips again before anyone connects the problem to tracking.

The upside is real. Better event quality gives Meta stronger signals for matching, attribution, and optimization. That's how lower CPA and higher ROAS become achievable without relying only on creative changes or broader targeting guesses. Cleaner data doesn't replace media strategy. It makes media strategy perform as intended.

EMQ is one of the clearest places where analytics engineering directly affects revenue. When the event stream is accurate, complete, deduplicated, and governed, the ad platform can do its job. When it isn't, the account pays for the gap.

Treat EMQ as an operating discipline. Audit thoroughly. Build carefully. Validate continuously. That's how tracking stops being a hidden source of waste and starts becoming an asset your growth team can trust.

If you need a practical way to keep analytics, attribution, and martech implementations under control, Trackingplan helps teams monitor web, app, and server-side tracking continuously, catch schema drift and broken events early, and fix data quality issues before they affect campaign performance.